To provide a valuable blend of insights from both the UX community and esteemed UX experts, we have chosen to divide our in-depth exploration into two parts.

The first was asking 11 industry experts for their opinions on the following 4 topics.

- AI in UX Research: Benefit or Detriment?

- What aspects of UXR are best compatible with using AI?

- Can UX Researchers Stay Marketable w/o Adopting AI?

- Thoughts on AI Participants / AI generated responses

In parallel, we prepared a survey that we distributed in UX communities and our audience with an aim to answer questions such as:

First and foremost, we asked UX researchers for their opinions on the relationship between AI and UX researchers. However, since researchers are not the only experts who take part in UX research, we decided to also survey other professionals: other People Who Do Research (PWDRs) such as strategists, people who come into contact or work in tandem with researchers (e.g. ResearchOps, Designers) and people who have decision-making authority over research (e.g. Product Managers). We believe their opinions and perceptions are important and will help shape the state of AI in the industry as a whole.

In the end, these are also the people who often play a key role in choosing the tools their team or company will use. They hold budget responsibility and are often the target market of tools that promote the wide use of AI, especially on the grounds of budgetary savings.

Key takeaways

➡️ Overall Perspective:

- Most UX professionals see the potential of AI in UX research, particularly in large-scale data analysis, insight extraction and as a simulated ideation partner.

🤖 General Sentiment:

- Respondents generally have a positive attitude toward using AI in UX research.

- Interestingly, the attitude doesn’t vary significantly based on their position or seniority in the field.

- The biggest motivators behind including AI in the UX research process are the potential to increase efficiency and reduce costs.

- The attitude towards AI isn’t wholly positive. The biggest detractor from using AI responses is simply that they are not human (lack of attributes, behavioral patterns and sentiments unique to human respondents).

💡 Adoption Rate:

- 95.3% of the participants either already included AI in their UX research at some point or are open to trying it out down the road.

- 66.4% of respondents currently utilize AI in UX research and plan to continue doing so.

- 20% haven’t adopted AI in their research process.

🔎 Specific Applications:

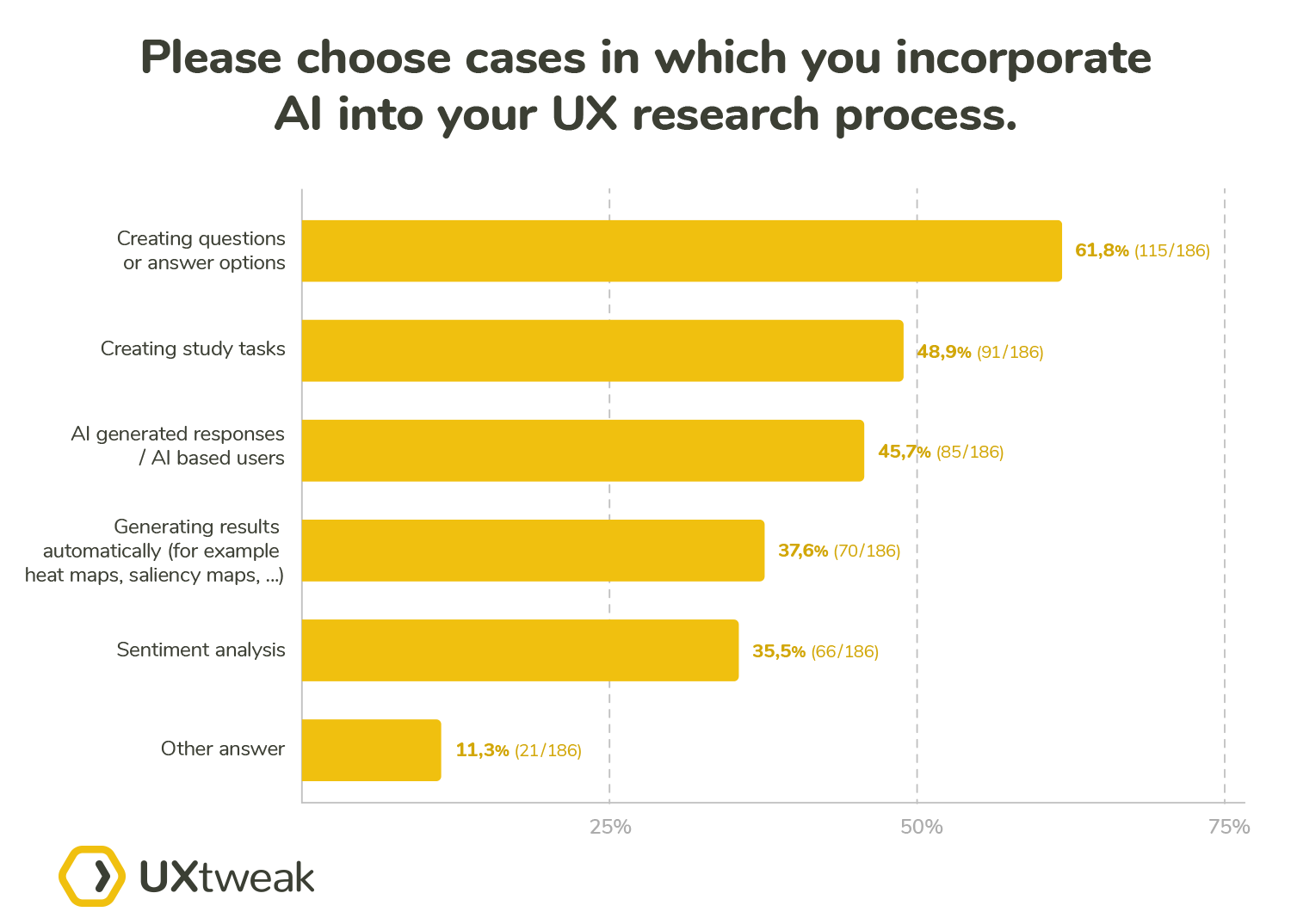

- The most common application with 61.8% is using AI to generate questions or answer options. The second most common application is creating study tasks as answered by 48.8% of participants.

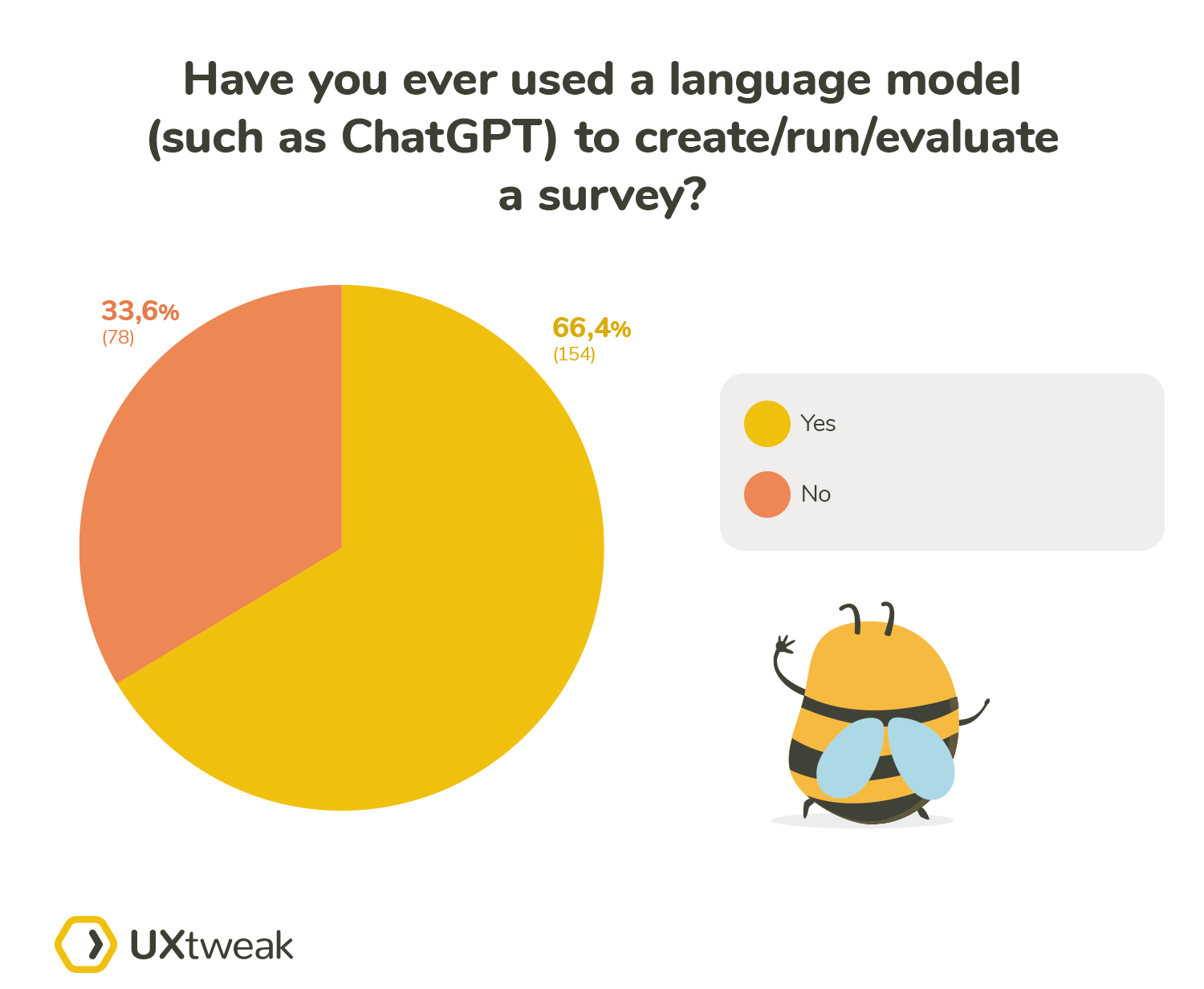

- 66.4% of all participants have used AI to create, run, or evaluate a survey.

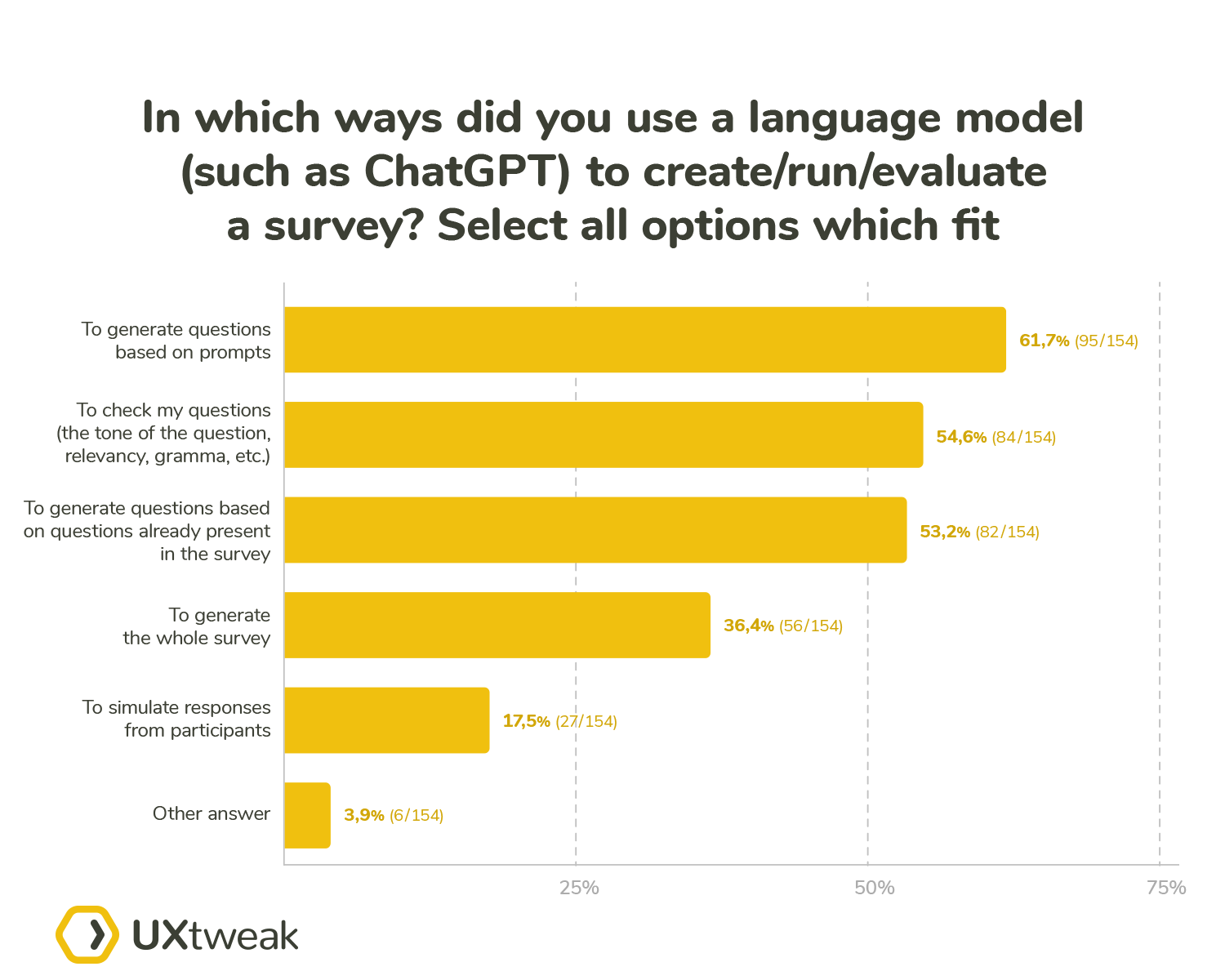

- Of those, 61.7% generated questions based on prompts

- Only 17.5% used GPT-like models to generate answers for their surveys

- When asked about the use of AI based participants in specific study types, the largest share of participants could imagine using AI-generated responses / AI participants for information architecture studies (51.72%), closely followed by surveys (46.98%).

💛 Satisfaction & Value:

- Among those who used AI for survey-related tasks, the average satisfaction score was 5.15 / 7. Encouragingly, no one expressed extreme dissatisfaction.

- Over half of the respondents have encountered situations where AI’s application in a UX process added little to no value.

💼 Job Security Perspective:

- Overall, respondents have a neutral stance.

- Compared across positions, UX researchers show the least concern about AI being a threat to their job security, while UX designers show the highest level of concern.

- The more senior respondents are, the less they view AI as a threat.

The report was created and written by UX Researcher Marek Strba, and Marketing Lead Tadeas Adamjak with the support of Lead UX Researcher Peter Demcak.

And brought to you in partnership with Axure, Condens and DesignerUp.

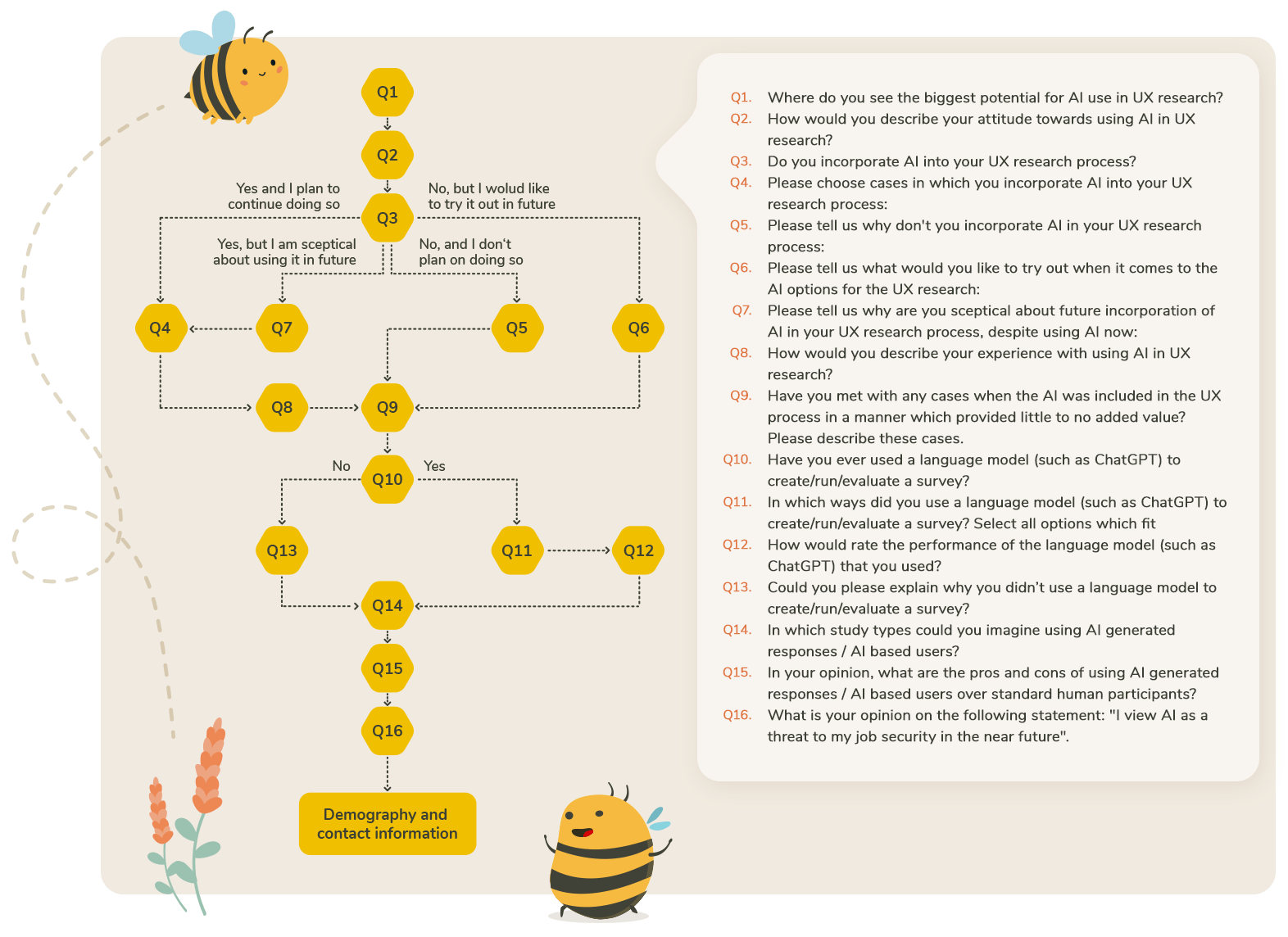

Survey structure

The core of the survey consisted of 16 questions in a structure shown in the diagram below:

What did we ask about AI?

Question number 1 – “Where do you see the biggest potential for AI use in UX research?” was meant to be a bit of an ideation warm-up. We wanted the participants to look at AI in a broad scope and to consider for themselves which aspect of UX research they see as the most viable for it. The question also gave participants an opportunity to offer a peek into their overall stance on AI + UX compatibility, so we analyzed the sentiment of the answers provided here. For example, if a participant answered this question with something like “There are no positive sides to AI in UX”, we would immediately know that they were a strong detractor and that their level of trust in AI would most likely be very low.

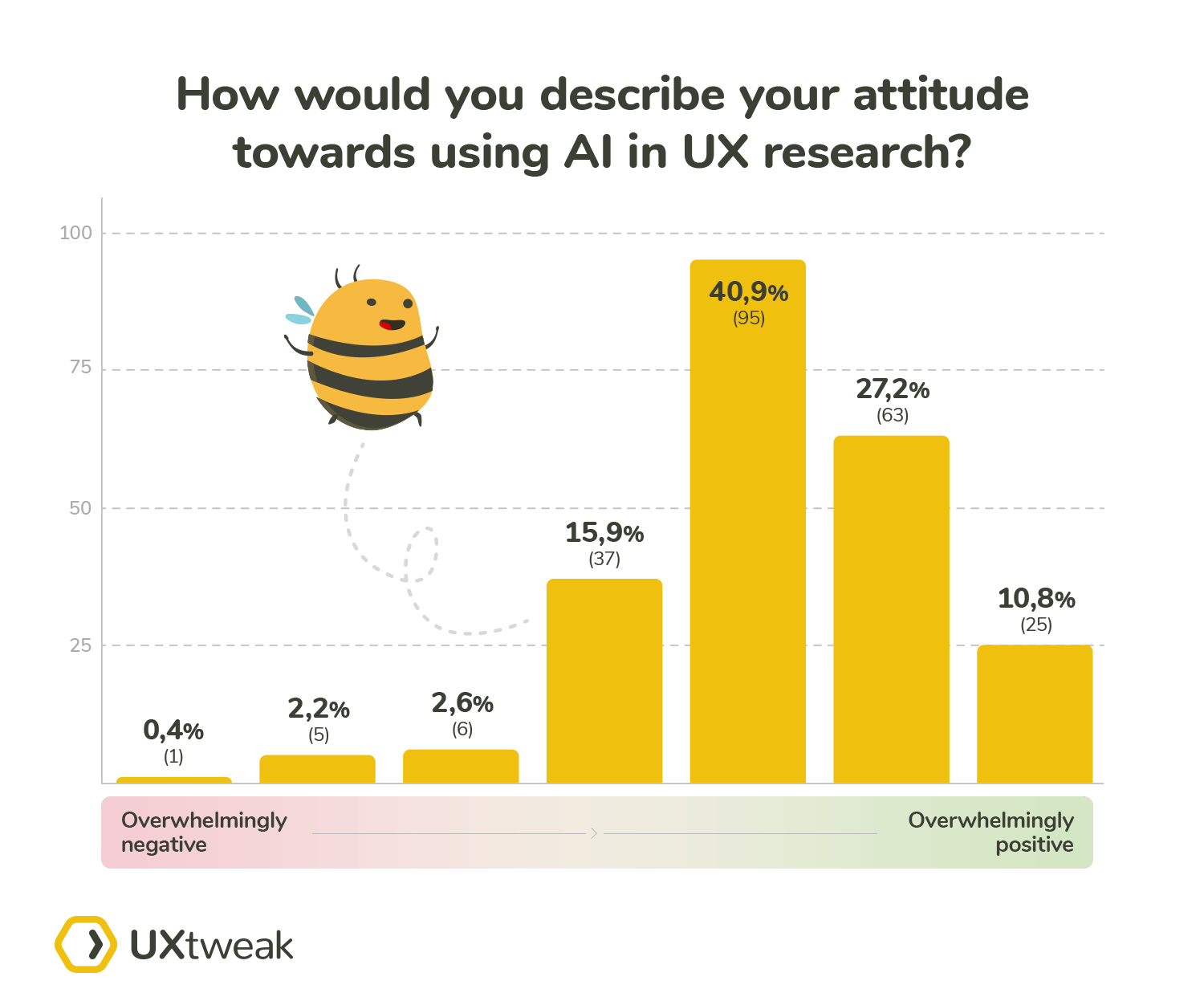

After Question number 1 asked the participants to describe their stance in their own words, we asked them to quantify their opinions with Question number 2 – “How would you describe your attitude towards using AI in UX research?” The details of this question were as follows:

- 7-point Likert scale

- Low score label – Overwhelmingly negative

- High score label – Overwhelmingly positive

We implemented this survey with the use of skip logic. There were 2 dividing points in the questionnaire, which determined the branches into which the participants were sent.

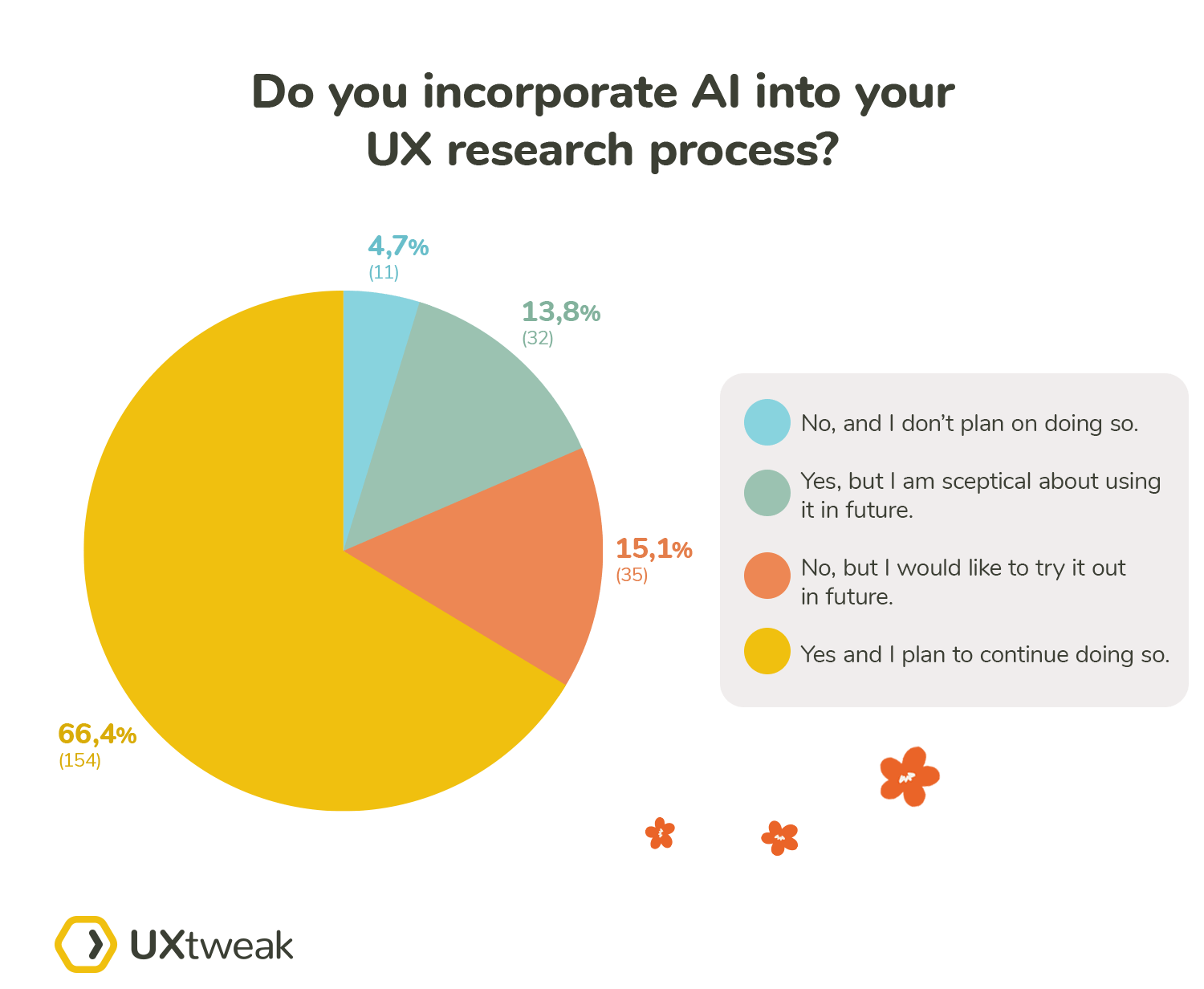

The first dividing point was question number 3 – “Do you incorporate AI into your UX research process?” With this question, we aimed to separate the participants into 4 subgroups:

- Group 1 – AI users with a medium to high level of trust – the option “Yes and I plan to continue doing so”

- Group 2 – AI users with a low level of trust – the option “Yes, but I am skeptical about using it in future”

- Group 3 – Participants who don’t use AI, but are considering it – option “No, but I would like to try it out in the future”

- Group 4 – Participants who don’t use AI and don’t plan on using it – option “No, and I don’t plan on doing so”

We asked the participants different follow-up questions, based on their experience. The AI users (Groups 1 and 2) were all asked two questions:

- Question number 4 – “Please choose cases in which you incorporate AI into your UX research process”. The aim of this question was to learn whether the opinion the participants had on their future continued use of AI was connected in any way to the scenarios in which the participants used the AI. We have provided the following options to choose from:

- Creating study tasks

- Creating questions or answer options

- Sentiment analysis

- AI generated responses / AI based users

- Generating results automatically (for example heat maps, saliency maps etc.)

- Other

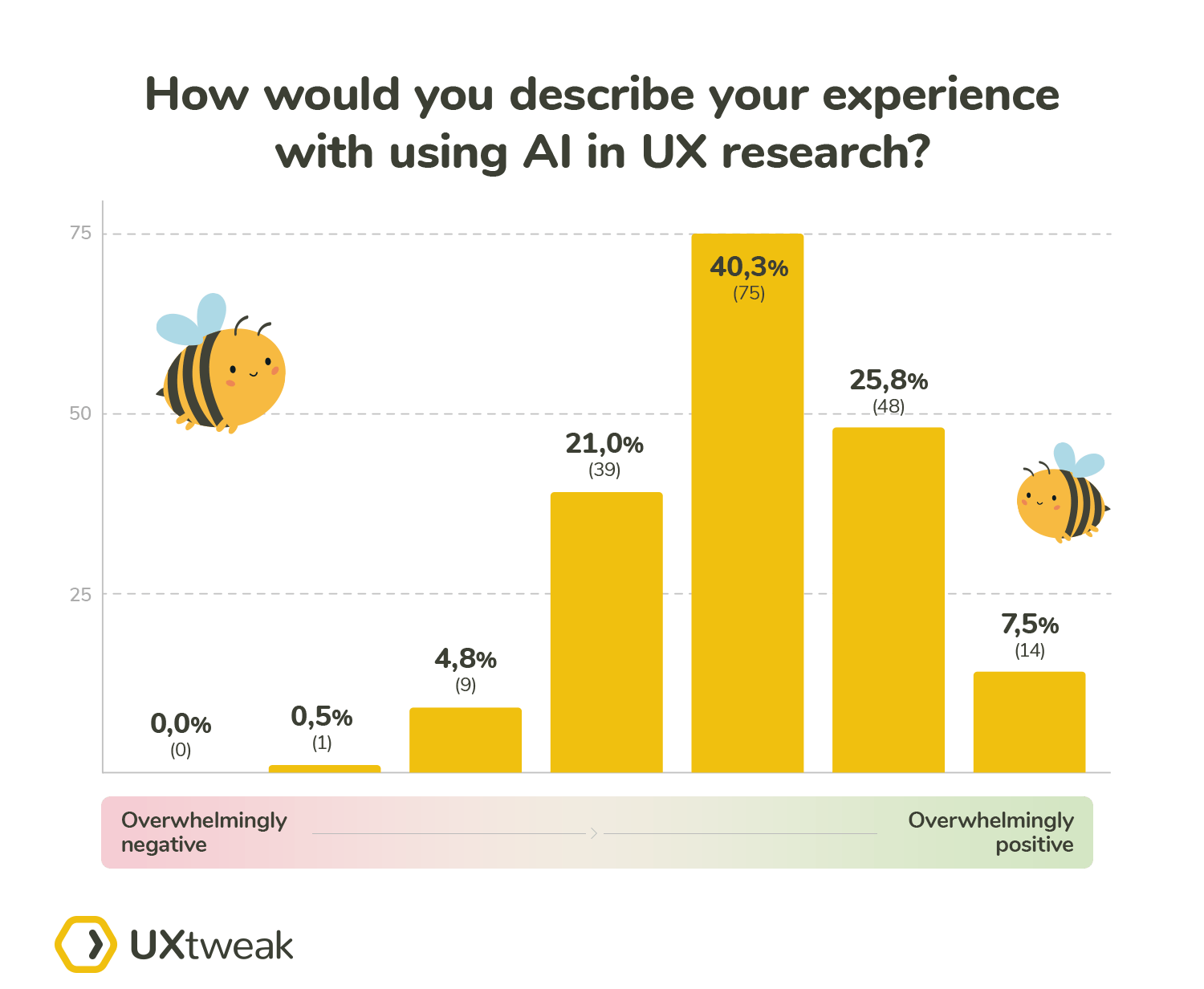

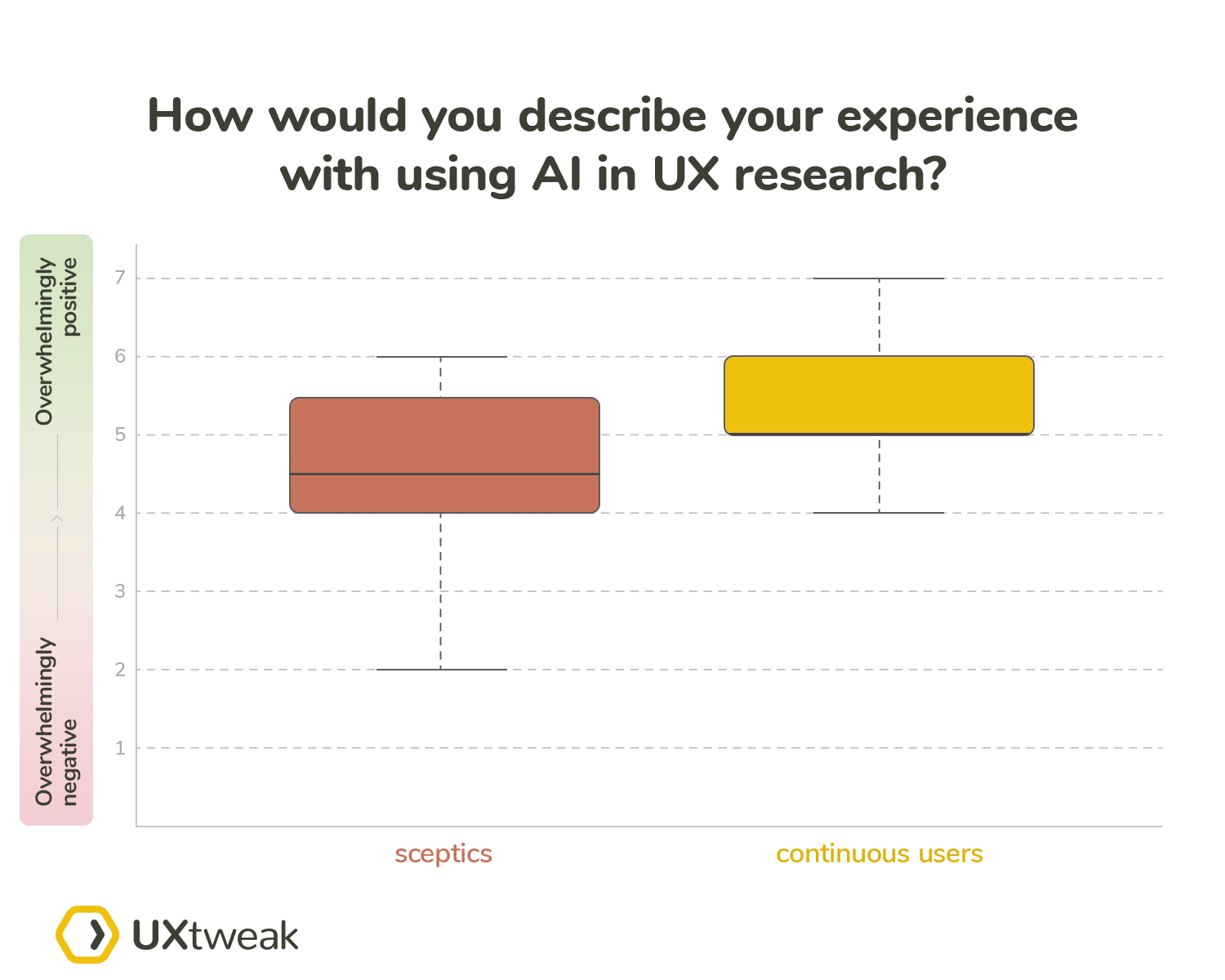

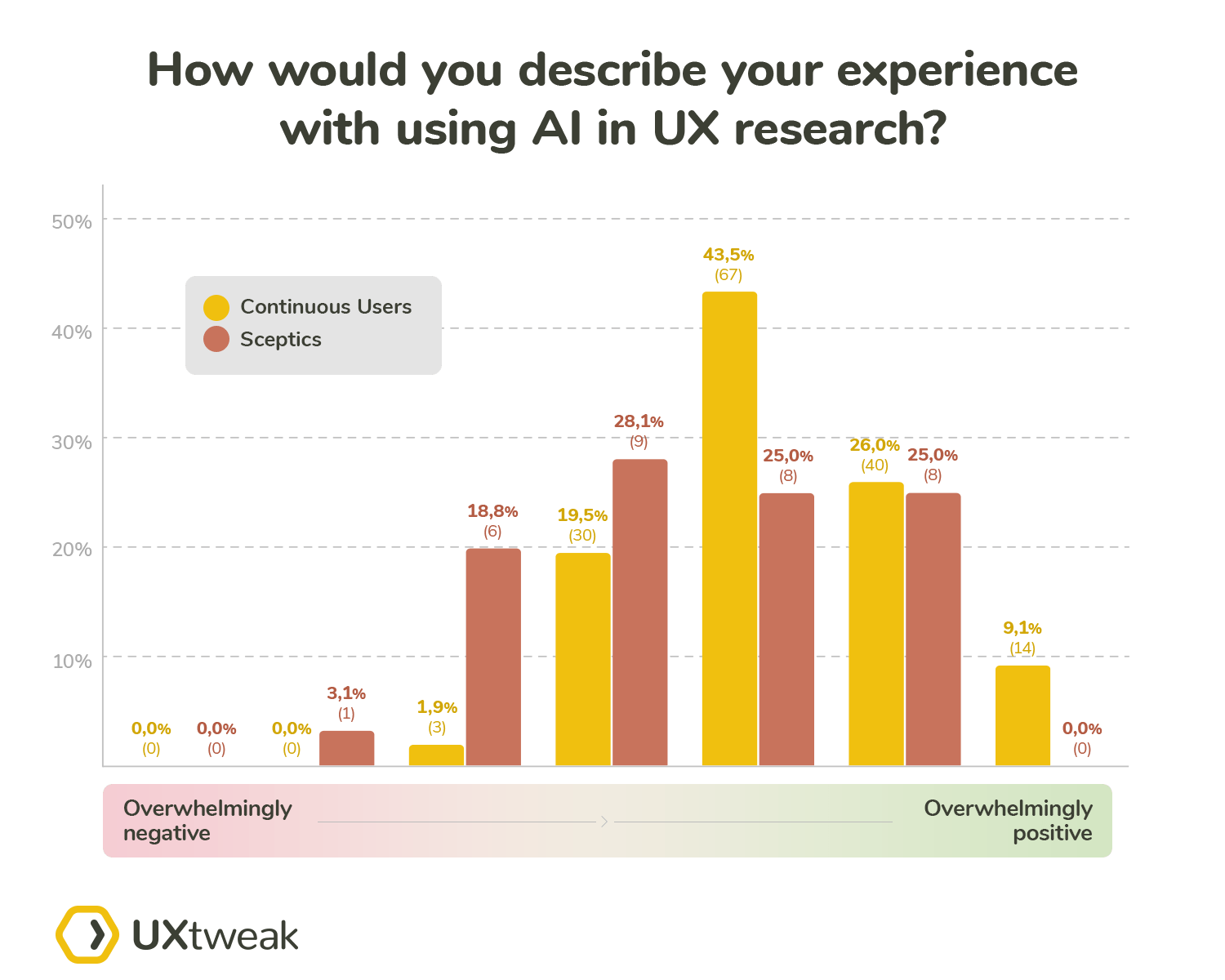

- Question number 8 – “How would you describe your experience with using AI in UX research?” We asked this question to learn whether the participants who are skeptical about their future continued use of the AI (Group 2) will have an overall more negative experience compared to the participants who aim to continue using AI (Group 1). The details of this question were as follows:

- 7-point Likert scale

- Low score label – Overwhelmingly negative

- High score label – Overwhelmingly positive

Additionally, we asked the skeptics (Group 2) the following question: “Please tell us why you are skeptical about the future incorporation of AI in your UX research process, despite using AI now.” (Question number 7). With this question, we simply wanted to learn more about the reasoning behind their skepticism/lower level of trust toward AI.

We had a unique follow-up question for each of the remaining groups (the participants who aren’t using AI in their research).

The question for potential users (Group 3) was: “Please tell us what would you like to try out when it comes to the AI options for the UX research.” (Question number 6). The answers to this question would tell us which AI options hold the biggest appeal for current non-users.

To learn about the other side as well – the biggest showstoppers, we asked the final Group 4 (non-users without future plans of use) to answer the following question: “Please tell us why you don’t incorporate AI in your UX research process.” (Question number 5).
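The branching at the first dividing point can be sketched as a simple lookup table. This is only an illustration of the skip logic described above, not the configuration of the survey tool we used: the names `FOLLOW_UPS` and `follow_up_questions` are hypothetical, and listing the follow-ups in numerical order is an assumption.

```python
# Answer options for Question 3, as quoted in the survey description.
Q3_CONTINUE = "Yes and I plan to continue doing so"
Q3_SKEPTIC = "Yes, but I am skeptical about using it in future"
Q3_CURIOUS = "No, but I would like to try it out in the future"
Q3_NEVER = "No, and I don't plan on doing so"

# Hypothetical routing table: Q3 answer -> follow-up question numbers.
# Groups 1 and 2 (AI users) get Q4 and Q8; skeptics additionally get Q7.
# Group 3 (potential users) gets Q6, Group 4 (non-users) gets Q5.
FOLLOW_UPS = {
    Q3_CONTINUE: [4, 8],
    Q3_SKEPTIC: [4, 7, 8],
    Q3_CURIOUS: [6],
    Q3_NEVER: [5],
}


def follow_up_questions(q3_answer):
    """Return the follow-up question numbers shown after Question 3."""
    if q3_answer not in FOLLOW_UPS:
        raise ValueError(f"Unknown Q3 answer: {q3_answer!r}")
    return FOLLOW_UPS[q3_answer]
```

For example, `follow_up_questions(Q3_SKEPTIC)` returns `[4, 7, 8]`, while a Group 4 respondent would only see Question 5 before rejoining the common path at Question 9.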

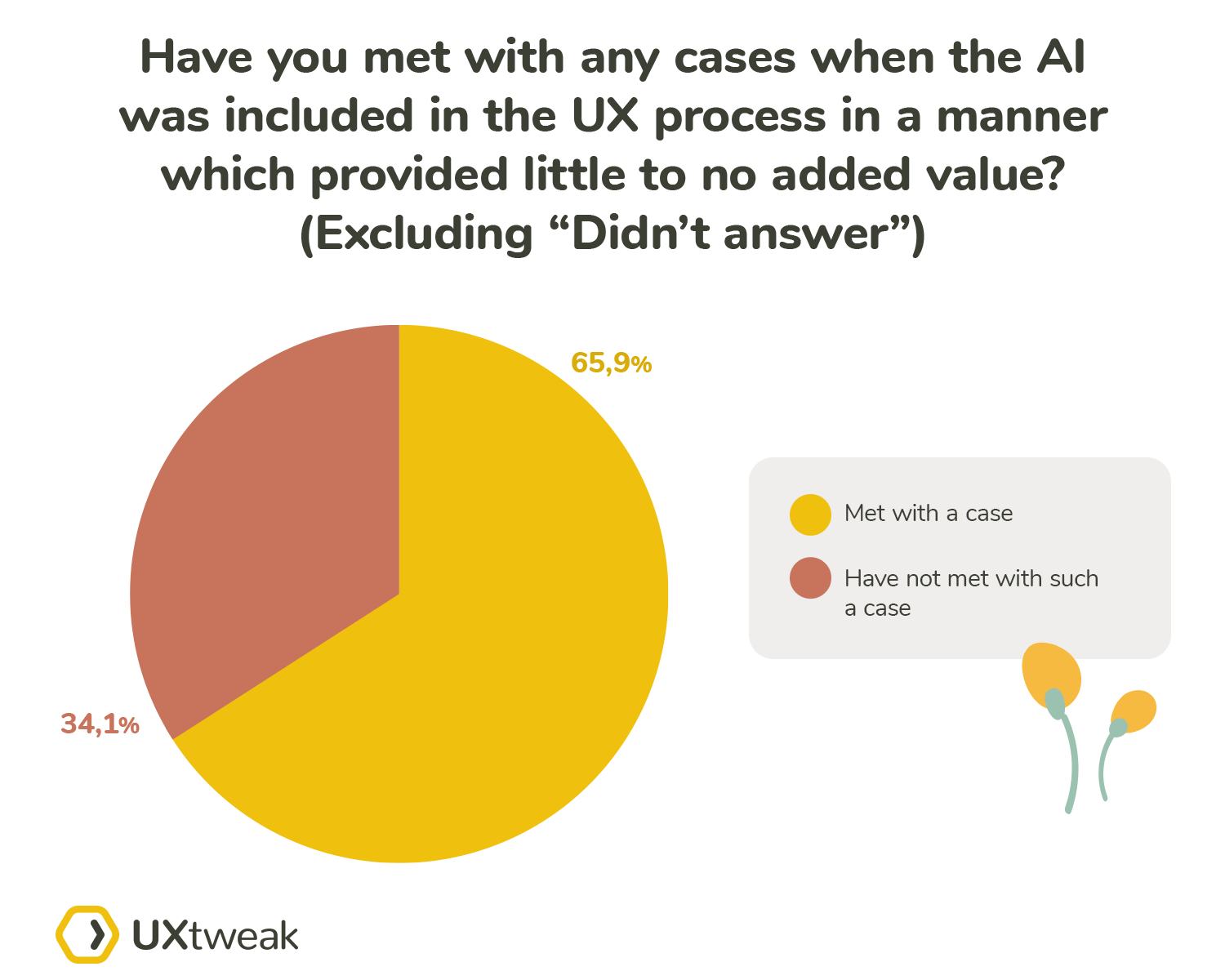

Question number 9 was common for all participants – “Have you met with any cases when the AI was included in the UX process in a manner which provided little to no added value? Please describe these cases.” By asking this question we wanted to learn more about the misuse of AI as a buzzword: AI has been added to many systems that have no need for it, just to check the “AI based solution” checkbox. With this question, we ended the first block of the questionnaire, aimed at general opinions regarding AI and UX compatibility.

One of the most polarizing points in the whole AI + UX discourse is the ever-wider use of language models, such as ChatGPT. The fact that these tools are easy to access and seemingly easy to use has, in our opinion, diluted the quality of UX research to some extent.

If handled properly, these tools can lead to higher efficiency; mishandling them, however, can break the whole research for you. The second block of our questionnaire focused on this subject and started with the second and last dividing point of the questionnaire – Question number 10 – “Have you ever used a language model (such as ChatGPT) to create/run/evaluate a survey?” This question simply split the participants into 2 groups: users and non-users. Each group then answered different follow-up questions.

The users were first asked: “In which ways did you use a language model (such as ChatGPT) to create/run/evaluate a survey? Select all options which fit” (Question number 11). The following options were provided:

- To check my questions (the tone of the question, relevancy, grammar etc.)

- To generate questions based on prompts

- To generate questions based on questions already present in the survey

- To generate the whole survey

- To simulate responses from participants

- Other

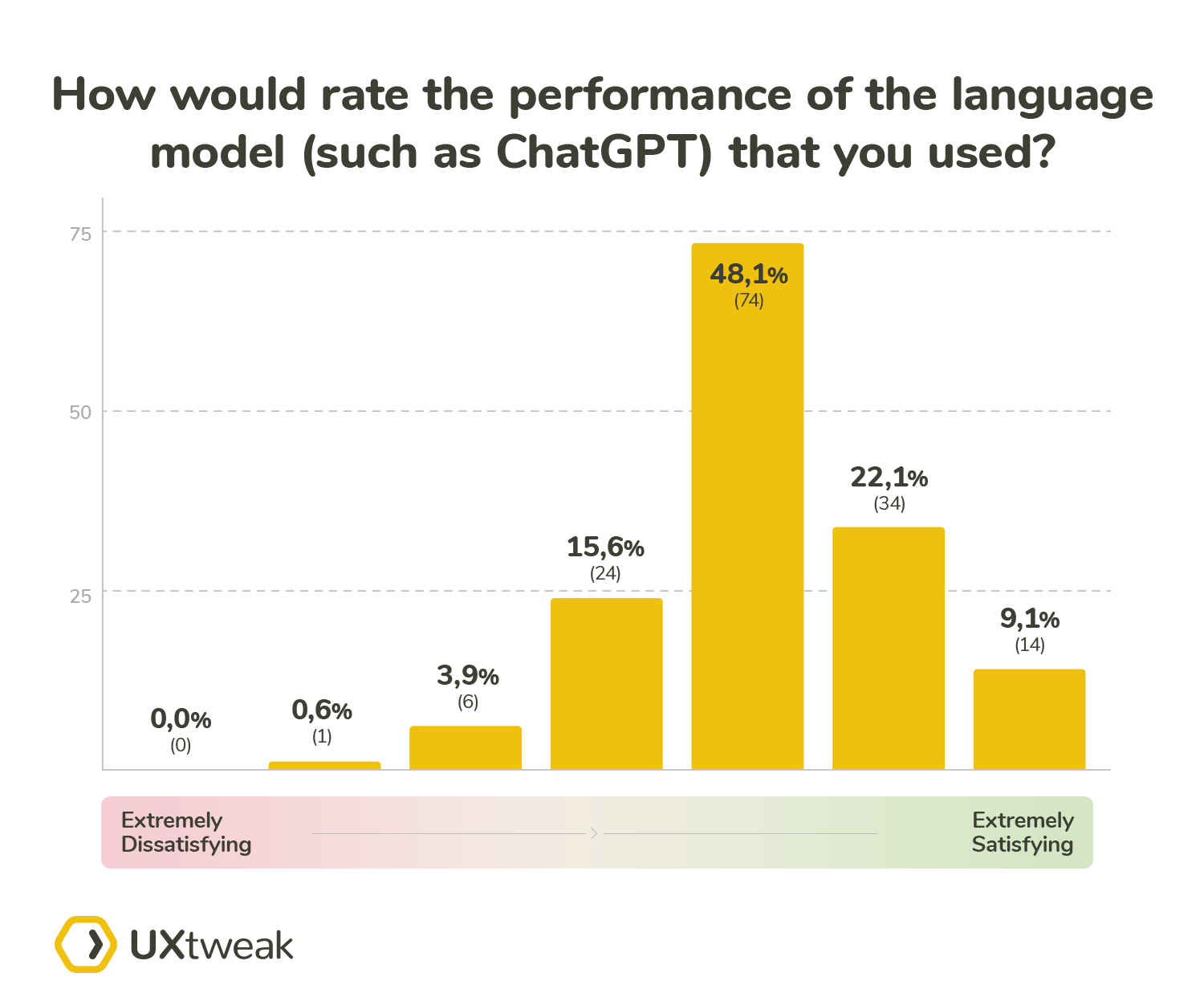

After the participants chose from the provided options, we asked them about their satisfaction with the performance: “How would you rate the performance of the language model (such as ChatGPT) that you used?” (Question number 12). The details of this question were as follows:

- 7-point Likert scale

- Low score label – Extremely Dissatisfying

- High score label – Extremely Satisfying

The aim of these two questions was to learn whether there were any differences between levels of satisfaction with the performance of the language models based on the scenario in which they were used.

We simply asked the non-users to shed some light on their decision: “Could you please explain why you didn’t use a language model to create/run/evaluate a survey?” (Question number 13).

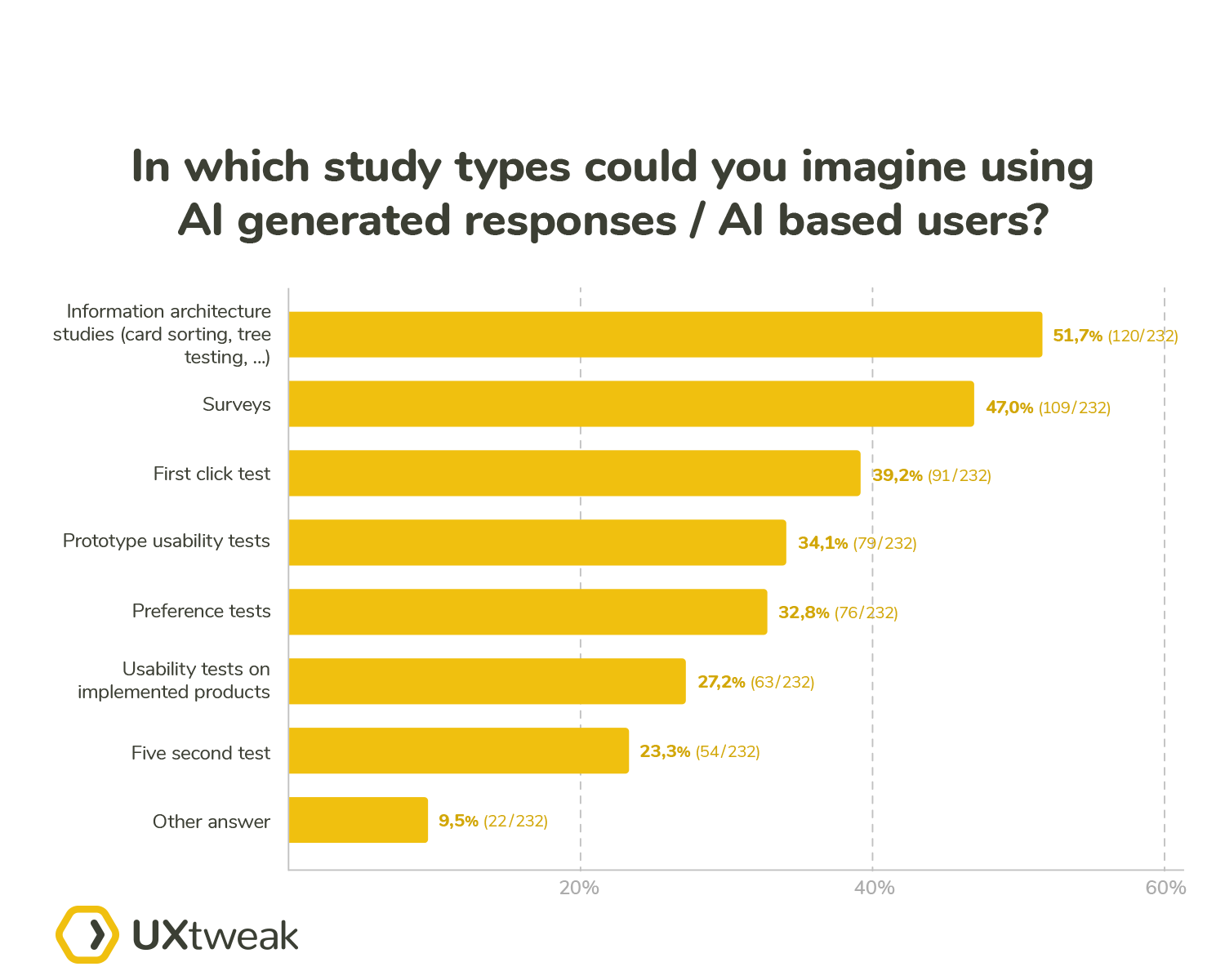

Another polarizing topic, closely related to language models, is the use of AI based users and, in part, the generation of AI based results (saliency map generators, for example, aren’t based on language models). First, we wanted participants to tell us which scenarios, if any, are best suited for these AI based responses – “In which study types could you imagine using AI generated responses / AI based users?” (Question number 14). The available options included:

- Information architecture studies (card sorting, tree testing…)

- Surveys

- Preference test

- First click test

- Five second test

- Prototype usability tests

- Usability tests on implemented products

- Other

To complement their chosen options, we asked participants about their thoughts in a broader scope: “In your opinion, what are the pros and cons of using AI generated responses / AI based users over standard human participants?” (Question number 15). With this question we ended the second block of our questionnaire, which was specifically focused on the language models.

The final question addressed the ever-increasing use of AI, not only in the UX field but in general, and the fact that it has arrived together with a growing number of people who no longer feel their jobs are secure. This topic is often overlooked, even though job-security anxiety is a real problem that can have a significant detrimental effect on anyone’s quality of life.

We have always been and always will be strong advocates of mental health, therefore we didn’t think twice about addressing this topic in our questionnaire. We asked all our participants the following question: “What is your opinion on the following statement: “I view AI as a threat to my job security in the near future””. (Question number 16) The details of this question were as follows:

- 5-point Likert scale

- Low score label – Strongly disagree

- High score label – Strongly agree

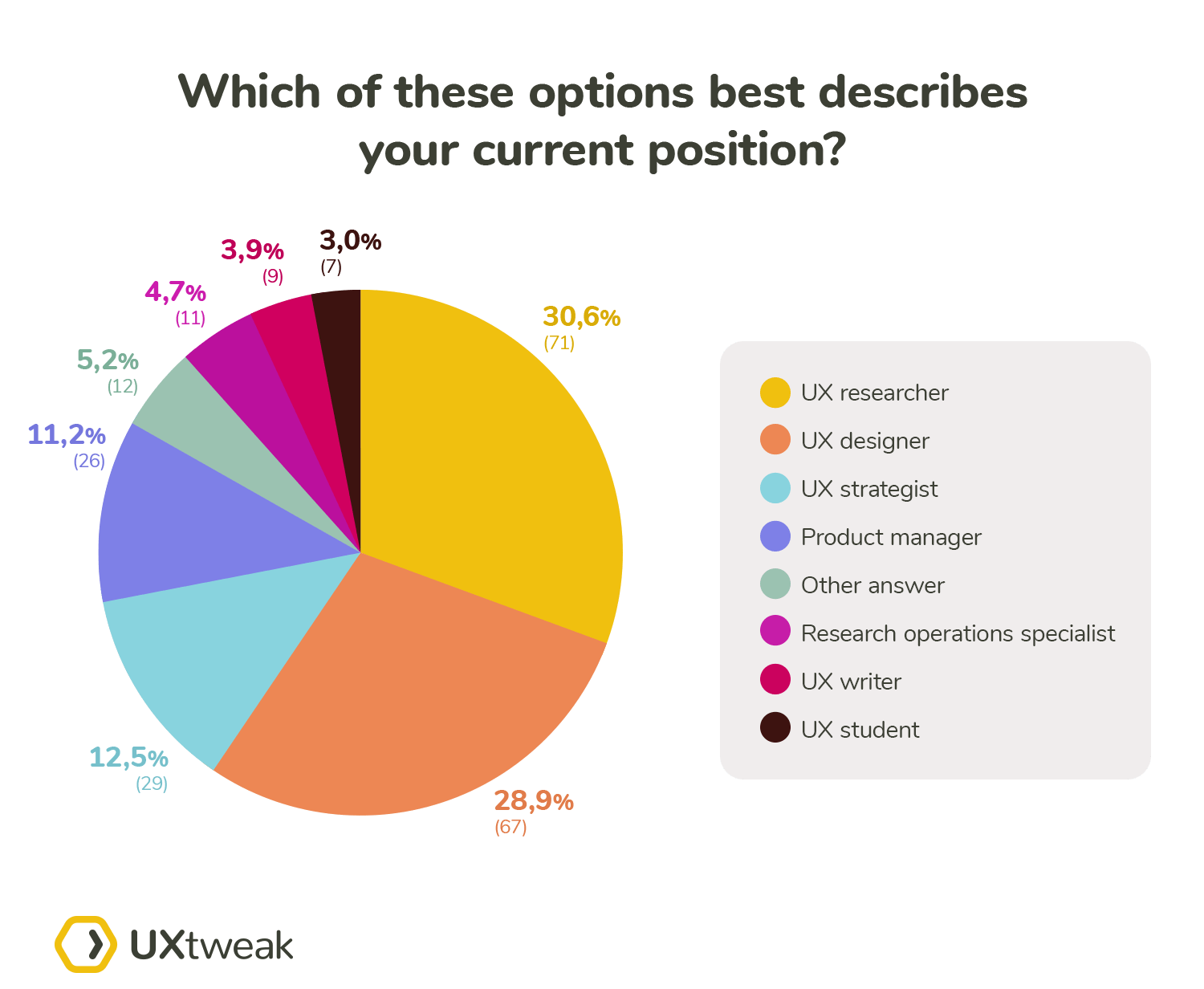

Demographics

We asked all our participants to provide some context on their background. We only asked about details that we believed could explicitly influence the answers provided to the questions in the core questionnaire. There were 4 demographic questions that we included in the questionnaire, all of which were single choice:

- Question 17 – Which of these options best describes your current position?

- UX researcher

- UX designer

- UX strategist

- Research operations specialist

- Product manager

- UX writer

- UX student

- Other

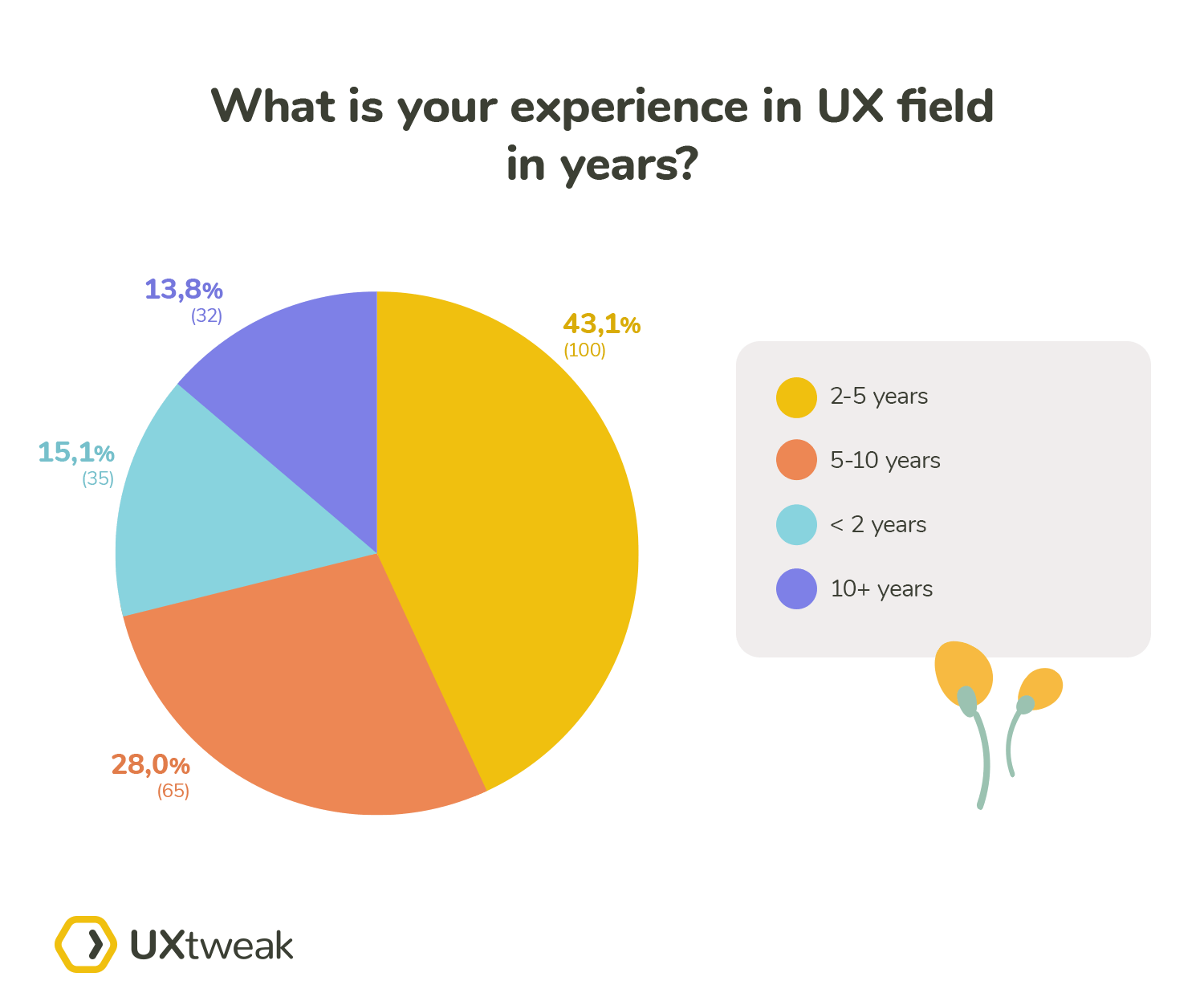

- Question 18 – What is your experience in the UX field in years?

- <2 years

- 2-5 years

- 5-10 years

- 10+ years

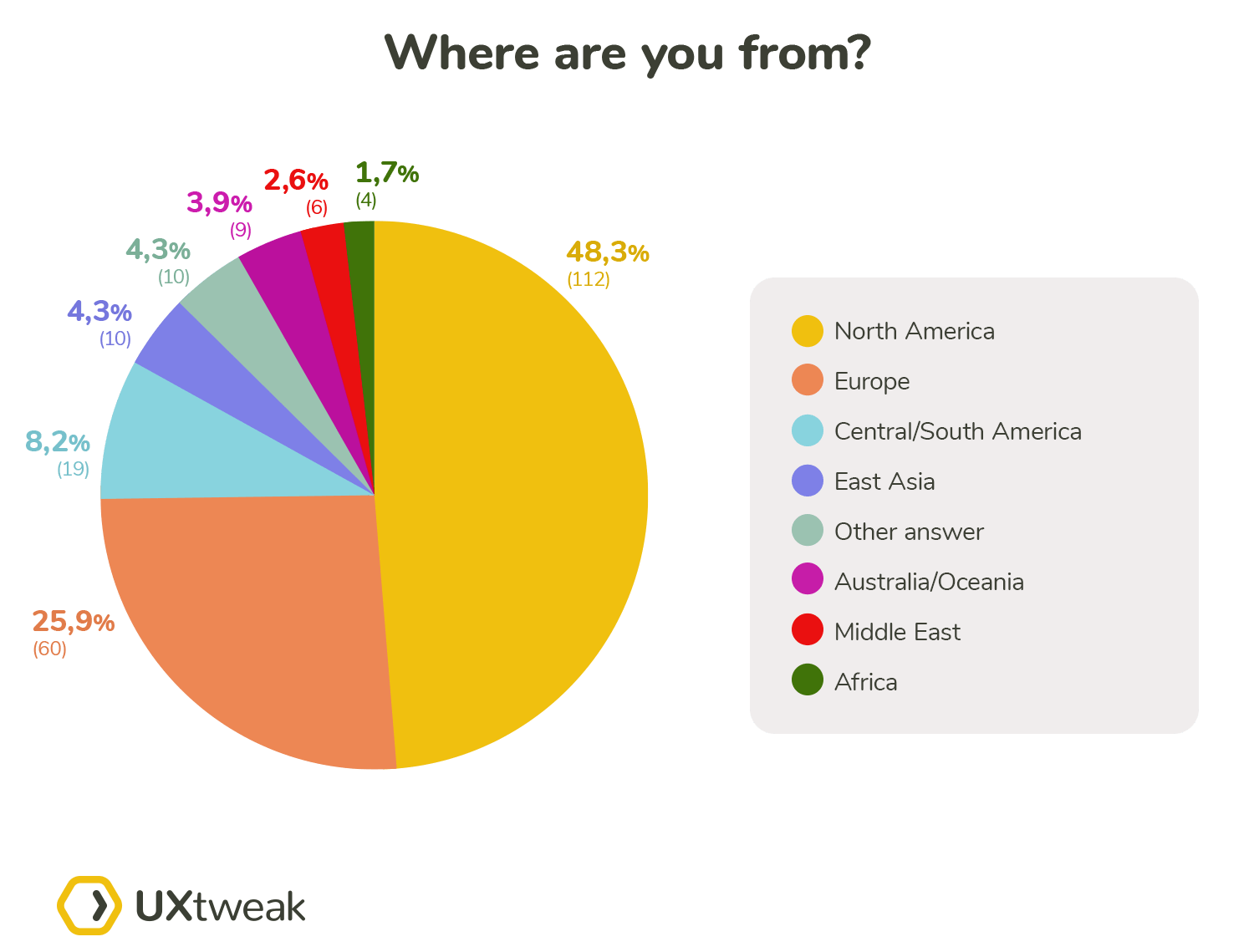

- Question 19 – Where are you from?

- North America

- Central/South America

- Europe

- East Asia

- Middle East

- Africa

- Australia/Oceania

- Other

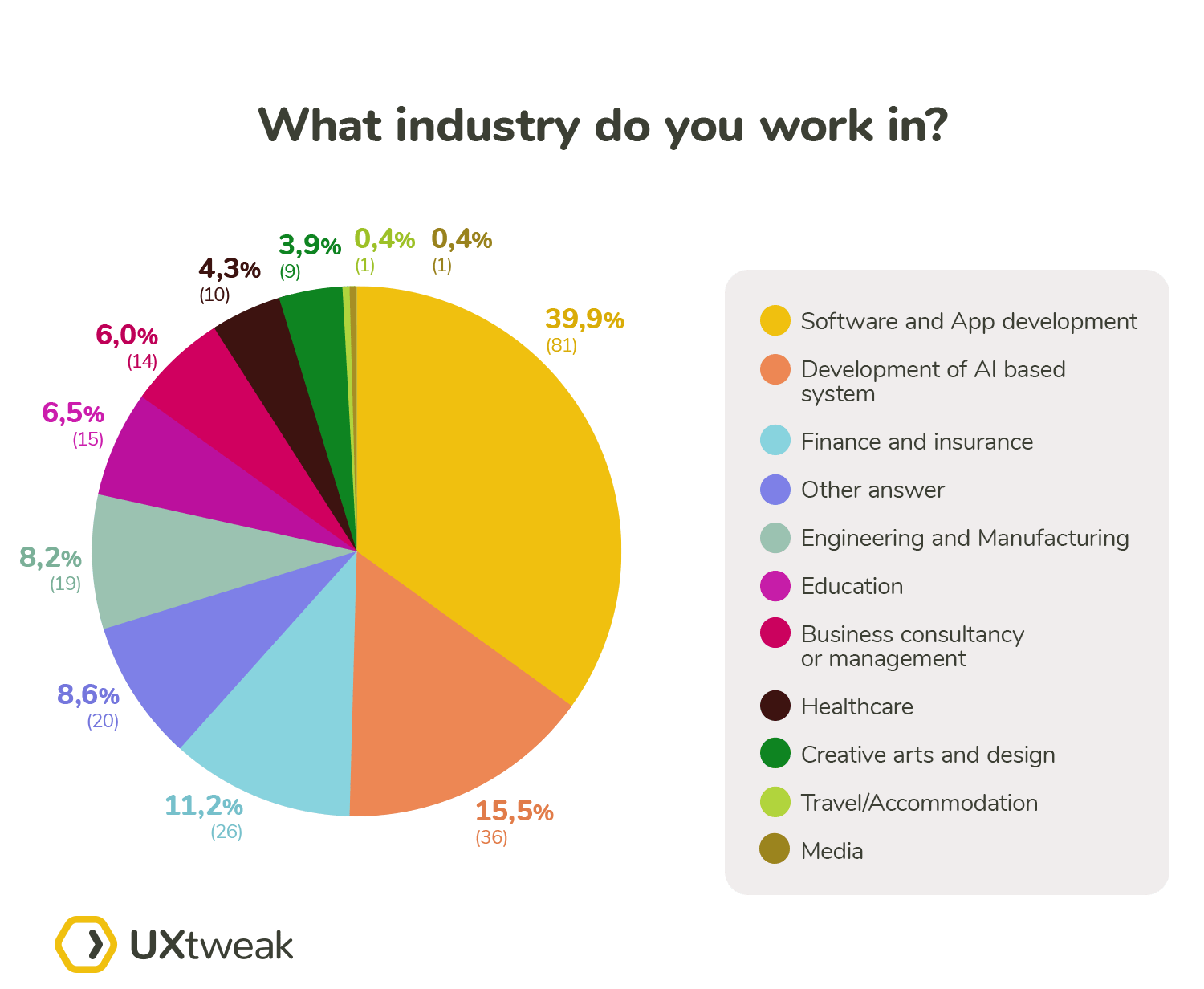

- Question 20 – What industry do you work in?

- Development of AI based systems

- Software and App development

- Finance and insurance

- Healthcare

- Travel/Accommodation

- Education

- Engineering and Manufacturing

- Media

- Creative arts and design

- Business consultancy or management

- Other

Demographic and participation details

After cleaning out the data we were left with 232 answers. The largest group of our respondents were UX researchers (30.6%), with UX designers following closely afterward (28.9%).

Most represented were people with 2-5 years of experience (43.1%). A notable 19.7% of UX researchers and 26.9% of UX designers had 5-10 years of experience. 18.3% of UX researchers and 6% of UX designers had more than 10 years of experience.

Our survey had a global representation. 48.3% of respondents were from North America making up the largest group in our survey, while Europeans made up a bit more than a quarter (25.9%).

The two most prevalent sectors the respondents work in are Software and App development (39.9%) and Development of AI based systems (15.1%).

Survey results

We split the results into three sections, matching the three main blocks of our questionnaire:

- General view of AI and UX compatibility

- The use of language models and AI based responses

- AI and job security

Alongside the survey, we also gathered qualitative responses from renowned UX experts. We included some of their key points in the sections below.

General view of AI and UX compatibility

This section of the questionnaire consisted of the first 8 questions; however, some of them were mutually exclusive, depending on the answers to previous questions.

The potential of AI in UX research

The very first thing we wanted our participants to address was their general view of how AI can help in UX research. To let them express their high-level opinions we asked them:

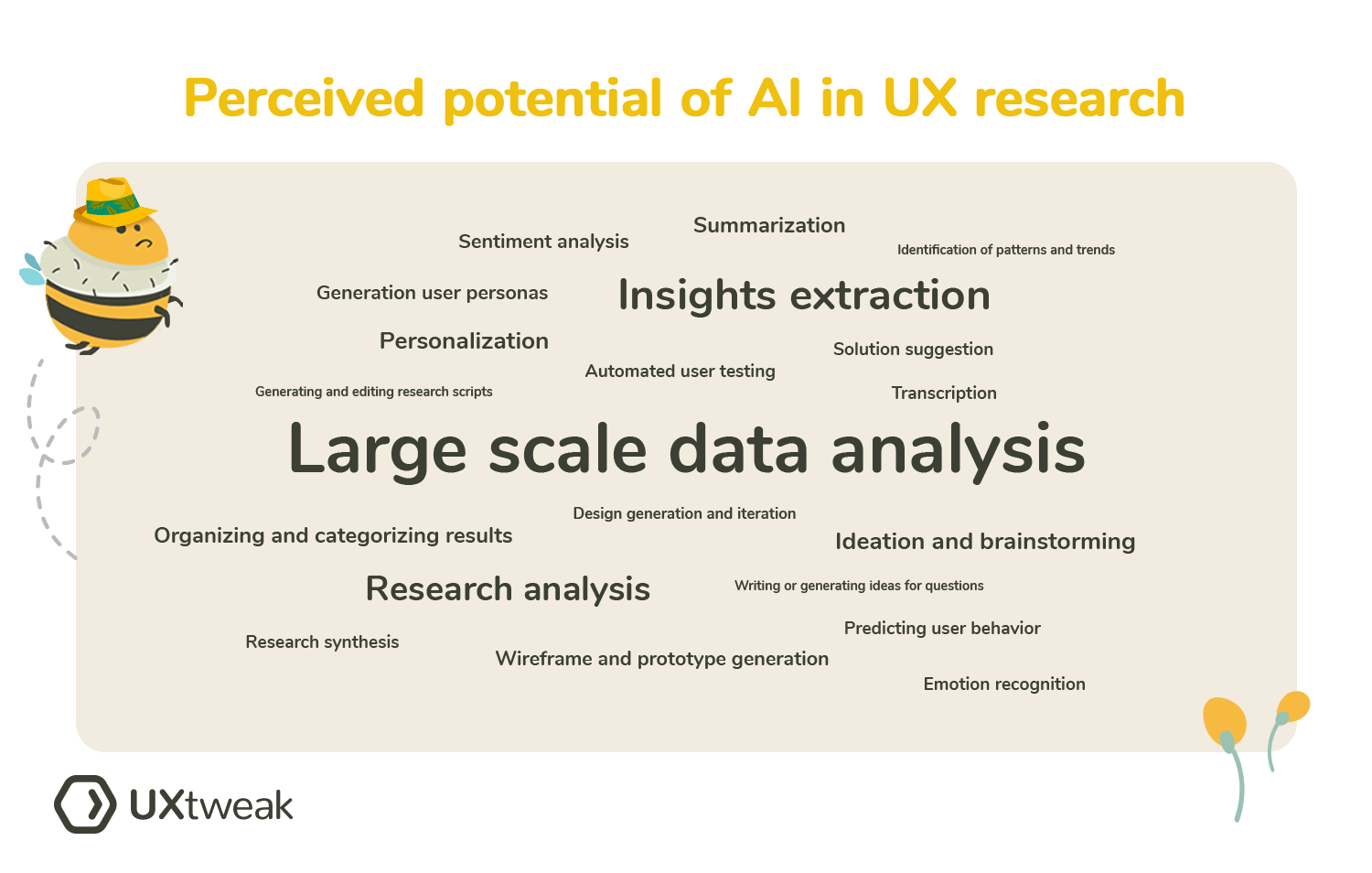

Where do you see the biggest potential for AI use in UX research?

The 5 most common answers related to:

- Large scale data analysis

- Insights extraction

- Research analysis

- Personalization

- Ideation and brainstorming

You can see all the repeated answers visualized in the following word cloud:

Some of the answers caught our eye:

“Helping with compilation, transcription, insights and reports. AI is helpful to discover patterns, but I think that analysis and interviews are “human things”. Because it needs the balance between sensibility and reason, and most of all: curiosity.”

Respondent: UX Designer, Brazil, 2-5 Years of Experience, works in Creative Arts and Design

Curiosity, mentioned by our participant, is one of the traits that come naturally to humans, especially to researchers and creative people. In our view, however, this characteristic cannot be achieved or simulated by AI.

“AI can help a lot to achieve different types of tasks: research competitors, find inspiration or similar products, get advice to find target groups for usability testing, help write scenarios, etc. I don’t see any ability to automate any of these tasks but at least for now spend less time on manual or repetitive work.”

Respondent: UX Researcher, Czech Republic, 2-5 Years of Experience, works in Software and App development

AI can be used as a tool to augment your research process, but not to complete it for you. Like any other tool, it should be handled with care.

“Research ops such as scheduling participants, writing or generating ideas for interview questions, analysing responses and collating common themes, suggestions for most appropriate research method when given a UR problem”

Respondent: UX Designer, England, 2-5 Years of Experience, works in Finance and insurance

People sometimes forget how much paperwork, organization, bureaucracy and other similar tasks are an integral part of being a UX ReOps Lead or a UX strategist.

“AI is a really useful resource for research, if you are lack of users you could ask it to impersonate different users an see their opinions, you could do a benchmark of your competitors, ask the AI for any insights inside an infinite report, the possibilities are many”

Respondent: currently not working, Spain, <2 Years of Experience, worked in Healthcare

And

“AI is very good at assuming a persona. You can practically find out the way certain Persona thinks by assigning that personal to the AI. Can be primed to make sure that the answers are modulated as per the Persona in every single conversation. AI therefore becomes a nice tool to validate your ideas before even constructing them and testing them with real users.”

Respondent: Product manager, Singapore, 10+ Years of Experience, works in Software and App development

These two quotes both describe using AI as a respondent in some manner. We don’t agree with the first quote, which paints AI responses as an alternative to testing with real users. By contrast, the second quote suggests doing a dry run with an AI respondent and then validating your findings with real users. This is a more realistic approach in our opinion.

The stark difference between these two opinions can also be attributed to the significant difference in the seniority of these two participants. The junior participant is eager to use AI in a more radical manner, compared to the senior expert, who prefers to handle AI with care.

Additionally, one of the questions we sent out to the UX experts in a separate survey was: “What would be the one aspect of UX research that you think is best compatible with using AI and why?”

The answers mostly aligned with the most frequent topics from our survey research. For example the words of Dr Gyles Morrison MBBS MSc:

Stéphanie Walter sees the biggest potential in help with ReOps, assistant work, initial data analysis and as an ideation partner. She does, however, caution against blindly believing the outputs that AI provides. To quote her exactly:

To sum it up, the community believes that the main aspects in which AI can prove useful for the UX research process include:

- Initial and supervised data analysis, pattern recognition

- Help in creating personas, potential use includes dry runs, later validated by answers from real users

- Automation of mundane tasks and help with organization of the research

- Ideation partner for copywriting and to some extent wireframes and prototypes

- Transcription, emotions and sentiment analysis

However, a key takeaway is: Always question the results provided by the AI. To quote another of the participants:

“Artificial intelligence systems have great potential, but their performance also depends on their data.”

Respondent: UX Researcher, United States, 5-10 Years of Experience, works in Engineering and Manufacturing

Since the AI models are dependent on data used to train them, they also inherit all of the biases present in the dataset.

What is the attitude of the UX community towards AI?

As can be seen in the results below, most of the participants seem to be excited about the opportunities AI brings. Most of the participants (183) see AI in a positive light, and 25 even chose an overwhelmingly positive stance. By comparison, participants who expressed any sort of negative stance (values 1-3 on the scale) were a small minority, with a total of only 12 responses. The average score was 5.19 across 232 responses. Based on these results we could conclude that UXers are open to new technologies, as long as they see an added benefit in their use.

Additionally, we tried separating the responses by seniority level and by profession.

None of these groups deviated significantly from each other or from the overall result.

How many participants incorporate AI in their UX research?

To better understand answers provided later down the road, we asked our participants whether they are using AI in their own research or if the opinions they provide are from the outside looking in.

95.3% of the participants either already included AI in their UX research at some point or are open to trying it out down the road.

Opinions of people who use AI in their research

The following section includes answers only from the participants who have already used AI in their UX research.

In which cases is the AI implemented in UX research?

From the answers of our participants, we can conclude that most users use AI to create questions or answer options. A little more than 60% of all AI-using participants chose this option. We expected this to be the most picked option simply because the output is the easiest to check and the work can be considered mundane to some extent.

We appreciate that creating questions and answer options is in some cases difficult and that changing one word can have a significant impact on the resulting survey. However, there are also many questions (such as demographics) where a touched-up result of a good prompt is more than sufficient. This is also one of the scenarios where AI can easily be used as an ideation sparring partner in times of creative drought.

In comparison, the runner-up, task creation, was picked less often, by a little under 50%. This was most likely due to task texts usually being more complex than standard survey questions. The biggest surprise among the results for us was that sentiment analysis dropped all the way to last place.

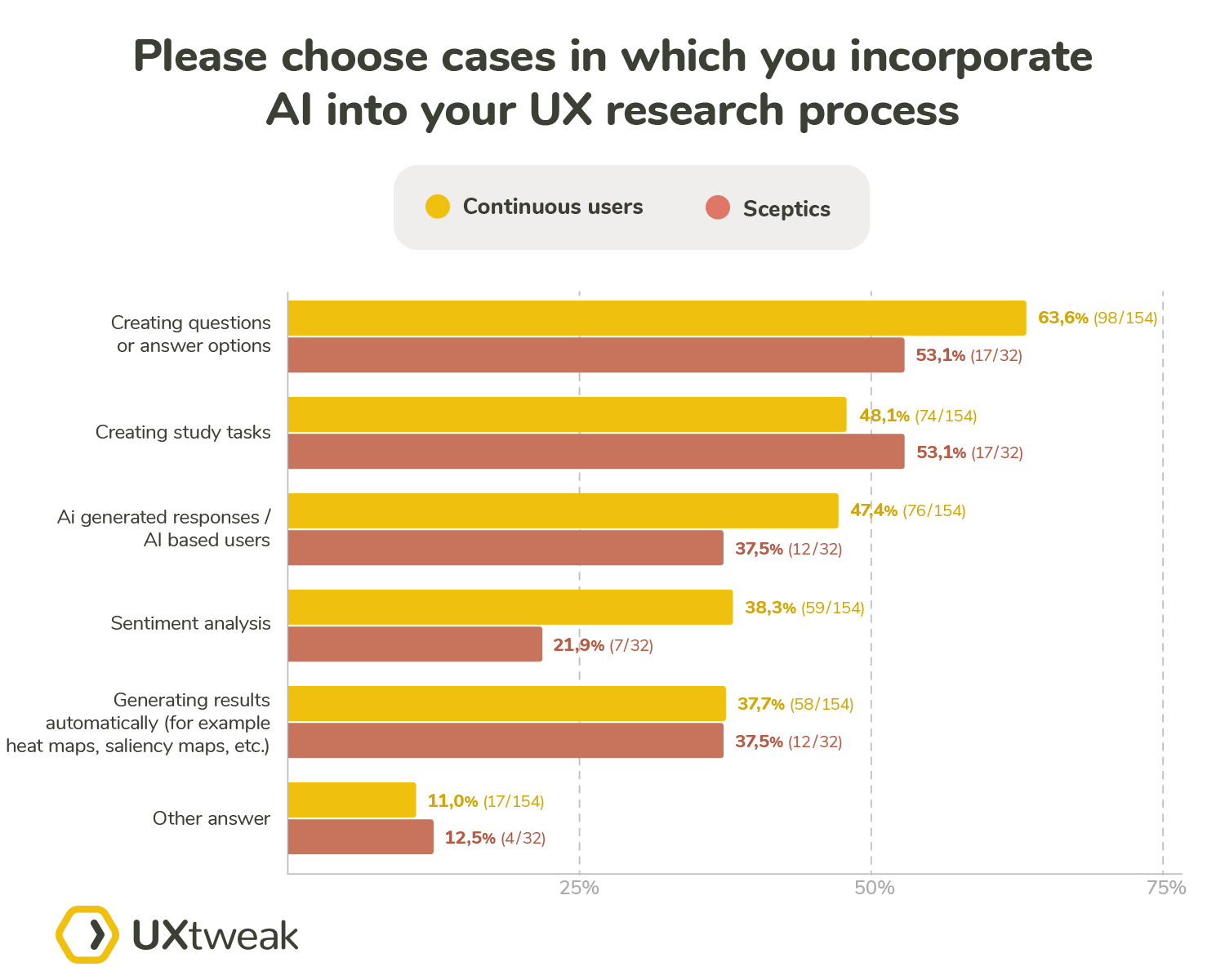

We split results by the answer to Q3 (Do you incorporate AI into your UX research process?) into two groups:

- Skeptics – “Yes, but I am skeptical about using it in future”

- Continuous users – “Yes and I plan to continue doing so”

The split had no effect on the use of AI for generating automatic results, which both groups used in around 37% of cases. The most significant differences were in question creation, the use of AI based users and sentiment analysis. In these three cases, the continuous users used AI significantly more than the skeptics, with differences of 10.5, 9.9 and 16.4 percentage points respectively. The only case in which the skeptics used AI more frequently than the continuous users was the creation of study tasks. From these results, we cannot identify a specific scenario of AI use that would act as a deterrent for the skeptics. The more specific views from the skeptics were gathered in Q7.

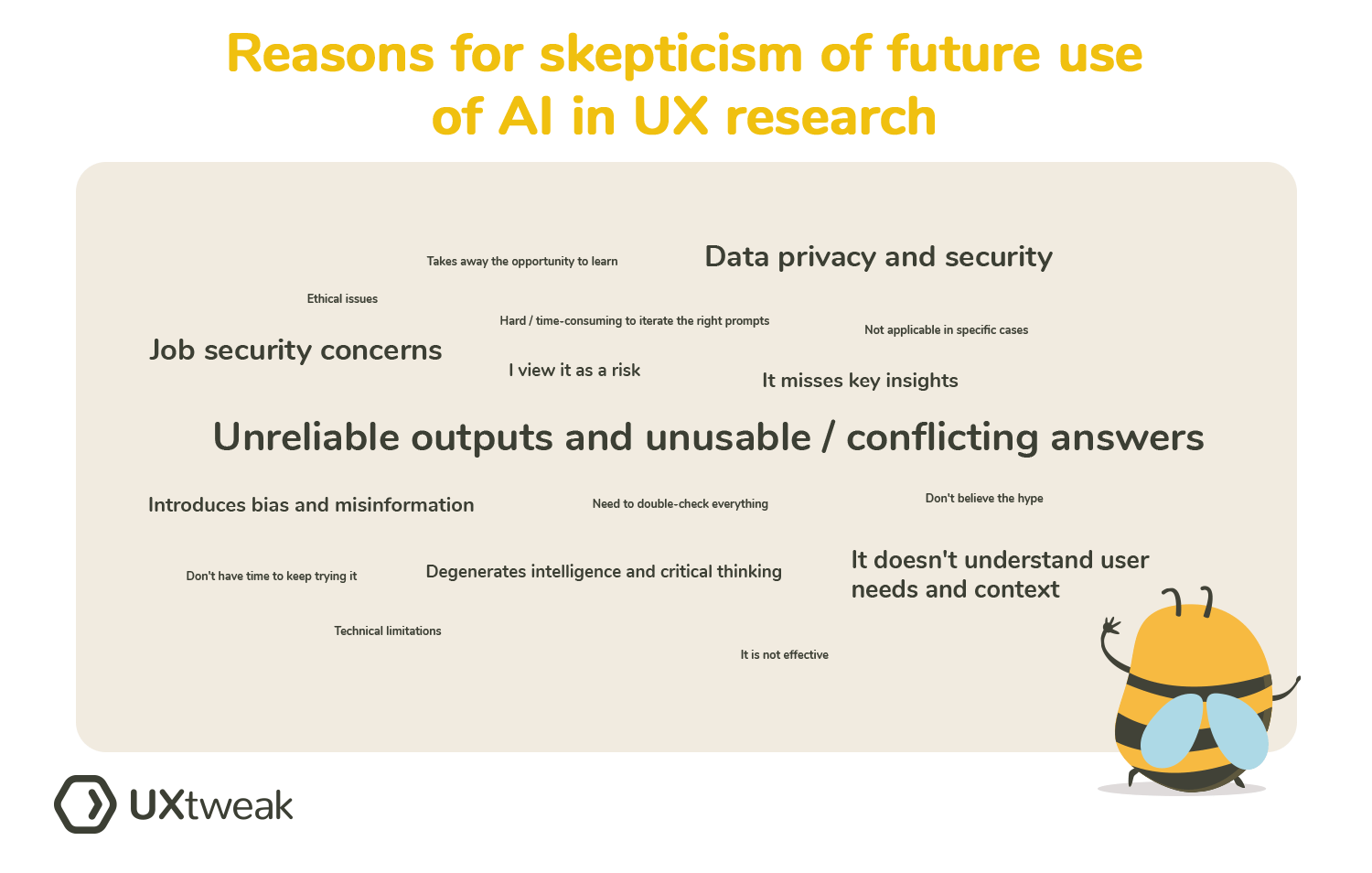

Reasons behind AI skepticism

Here are the top 5 most repeated reasons respondents mentioned when asked why they are skeptical about the incorporation of AI in the UX research process:

- Unreliable outputs and unusable, even conflicting, answers

- Job security concerns

- Data privacy and security

- It doesn’t understand user needs and context

- It misses key insights

Again, we prepared a word cloud for the most repeated reasons.

Below we list some of the answers that addressed a point or two we found especially interesting:

“The extensive use of artificial intelligence brings a lot of convenience, but on the other hand, it will also bring unemployment to people, make people’s intelligence degenerate and so on.”

Respondent: UX researcher, United States, 2-5 Years of Experience, works in Engineering and Manufacturing

Our minds need practice to stay sharp. Degeneration of intelligence can be one of the implicit factors that comes with leaving as much work as possible to the machines.

“AI is not the user and is only useful as a decision support system. Using it for ideas to do simple research will introduce 3rd party bias into the mix that we can’t control.”

Respondent: UX strategist, United States, 5-10 Years of Experience, works in Business consultant/Media

AI is not a user and we cannot compensate for the bias it brings with itself.

“I feel AI is a double edged sword. It can be very beneficial in helping you brainstorm and generate ideas, but at the same time, you still need to conduct your own research outside of AI to make sure that the things it told you are in fact true and relevant to your project. AI is only as good as your input (questions and prompts you use to generate results), the timeframe the data was pulled from that the AI uses, and the body of knowledge that the AI is pulling from. There is a rife opportunity for biases and misinformation. You just need to make sure and double check all the information the AI generates for you.”

Respondent: UX student, United States, <2 Years of Experience, works in Finance and insurance

Some of the answers also included positive points, such as the help that AI can provide during the ideation process. However, it is imperative not to blindly trust the AI results.

“I guess I would like to master the UX research process by myself then I try to get the help of ai.”

Respondent: UX designer, Morocco, <2 Years of Experience, works in Development of AI based systems

AI inclusion might have an impact on the learning process and training of a researcher and might lead to people not adopting key UX related knowledge since they can be led to believe this knowledge is not needed.

Experience with using AI in UX research

The average score for this question stood at 5.09 based on 186 responses (only participants who selected that they are using AI in their research saw this question). Based on this score we can claim that the AI users are rather satisfied with the experience they had while using AI.

We analyzed the results further by splitting the respondents by their seniority and their positions.

These splits didn’t show any significant differences. However, after we split the participants by their answer to Q3 into continuing users and skeptics, we saw a clearer difference. The skeptics averaged a score of 4.5 across 32 answers, while the users who plan to continue using AI in the future averaged a score of 5.21 across 154 answers.

Based on this, we can say that the skeptics were less satisfied with the performance of AI. For the specific reasons why they identified themselves as skeptics, refer to the results of: Reasons behind AI skepticism. However, it is important to note that we were able to gather only 32 “skeptics” answers, so the result may be skewed by the low number of responses.
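As a quick sanity check, the two subgroup averages can be recombined into the overall average reported for this question. The sketch below uses only the figures quoted in this report; the variable names are ours and purely illustrative.

```python
# Group sizes and mean scores as reported in the text.
skeptics_n, skeptics_mean = 32, 4.5
continuing_n, continuing_mean = 154, 5.21
total_respondents = 232

# All AI users who saw this question.
users_n = skeptics_n + continuing_n

# Weighted (pooled) mean of the two subgroup means.
pooled_mean = (
    skeptics_n * skeptics_mean + continuing_n * continuing_mean
) / users_n

# Share of all respondents who use AI and plan to continue.
continuing_share = 100 * continuing_n / total_respondents

print(users_n)                      # 186
print(round(pooled_mean, 2))        # 5.09
print(round(continuing_share, 1))   # 66.4
```

The pooled value matches the 5.09 average across 186 responses, and the continuing users’ share matches the 66.4% adoption figure from the key takeaways. The skeptics make up only about 17% of the AI users, which is why their lower average barely moves the overall score.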

Opinions of people not using AI in their research

In this section, we summarize the answers to questions reserved for participants who don’t incorporate AI into their research.

Why people decide not to use AI in their research

The reasons our respondents listed can be summed up into the following categories:

- No added benefit provided

- Lack of the human element and empathy

- Overlooks subtle patterns and insights

- AI providing false information and incorrect conclusions

- Lack of validity

- Security concerns and company policies

- Not being effective enough yet

Below we include the answers that we believe best represent the main points:

“If used to analyse and present findings, AI represents the illusion of knowledge. It’s not “intelligent”, it doesn’t think, but simply regurgitates information based on rulesets which – and this is the important bit – it often or even usually gets wrong. Training it to get anything usable takes far longer than just doing the work yourself.

Also, and this is the even more important bit, in outsourcing the analysis, you don’t engage as deeply with the source data, so you miss the more subtle patterns and insights you would get if you just did the actual work. When we learn in school, teachers use something called “spaced repetition” to impart the knowledge: information presented at periodic intervals to force the brain to connect the dots and store it as important information. When we analyse a transcript, and then another, we create this in ourselves. We should not outsource our opportunity to learn.”

Respondent: UX Researcher, England, 10+ Years of Experience, works in Software and App development

What we found interesting about this answer was that it contradicts some of the other answers, which mention pattern finding as one of the pros of AI use. By delegating this responsibility to AI, we can miss patterns that it cannot notice but we would.

“User Experience is largely a human engagement. Passive AI as an assistant is the proper utilization. C-3PO doesn’t fly the Millennium Falcon, it just tells you the odds of successfully surviving an asteroid field. (Never tell me the odds!) It doesn’t do diplomacy, it translates for diplomats. Same idea.”

Respondent: UX Researcher, United States, 10+ Years of Experience, works in Software and App development

We agree with this quote: AI should be used for the augmentation rather than the automation of your research. It’s a tool and it should be treated as one.

“I don’t really need it, for now I don’t see the use of it but maybe in the future if I’d need help while analyzing data I’d use it. For me personally ux research is all about empathy and trying to understand the other person and so isn’t capable of doing so (at least for now 😅). The human interaction and being able to ‘look around’ not only focusing on the main goal of the research is essential and ai won’t be helpful here.”

Respondent: UX Generalist, Poland, 2-5 Years of Experience, works in Engineering and Manufacturing

The lack of the human factor as mentioned in this answer has been a frequent reason mentioned not only in this question, but also in other sections of our survey. Even our experts cited it in their answers, for example as a part of his answer to “What would be the one aspect of UX research that you think is best compatible with using AI and why?”, Joel Barr answered:

Another great point comes from Erika Hall on AI’s relevance as a buzzword, perhaps to its own detriment in the long run:

Which aspect of AI use is the most appealing for the AI non-users

Here are the ones that were mentioned multiple times:

- Transcription and analysis of interviews

- Assisting with or automating some processes

- Creation and refining of user scenarios and personas

- Quantitative research analysis

- Formulating research questions

- Reporting and presentation creation

These terms were mentioned only once or twice, but we believe they are interesting nonetheless: simulated eye tracking, assisting with storytelling, heuristic evaluation.

For example, the following quote shows well the opportunities that a well-handled AI tool could bring to freelancers and smaller UX teams:

“If there were ways to ease my workload (I am a UXR team of one) by making some processes easier for me; also quantitative research is not my strong point and I think AI could easily be incorporated into this, which I would try.”

Respondent: UX Researcher, United States, <2 Years of Experience, works in Software and App development

Another answer aligned with our general opinion on letting AI do the mundane work for us:

“I would like to have a possibility to use prompts to transform raw data gathered in research tools into shiny pages, ready to put inside powerpoint slideshow”

Respondent: UX Designer, Poland, <2 Years of Experience, works in Automotive

The visual representation of data is easy to check, and if the AI system responds well to the provided prompts, we can imagine this as one of the low-risk, high-time-saving uses of AI.

Some participants also described more visionary use cases:

“Solution that helps to bring multiple data sources into 1 insights dashboard view. I am working on number of multiple complex programs and i dream that in the future we can just tag insights and they are automated and analysed by AI to give us a great KPI and insight dashboard that is not just a simple data points ( maybe it builds out persona’s based on tagged content and also identifies gaps in research that should be prioritised)”

Respondent: UX researcher, Ireland, 5-10 Years of Experience, works in Engineering and Manufacturing

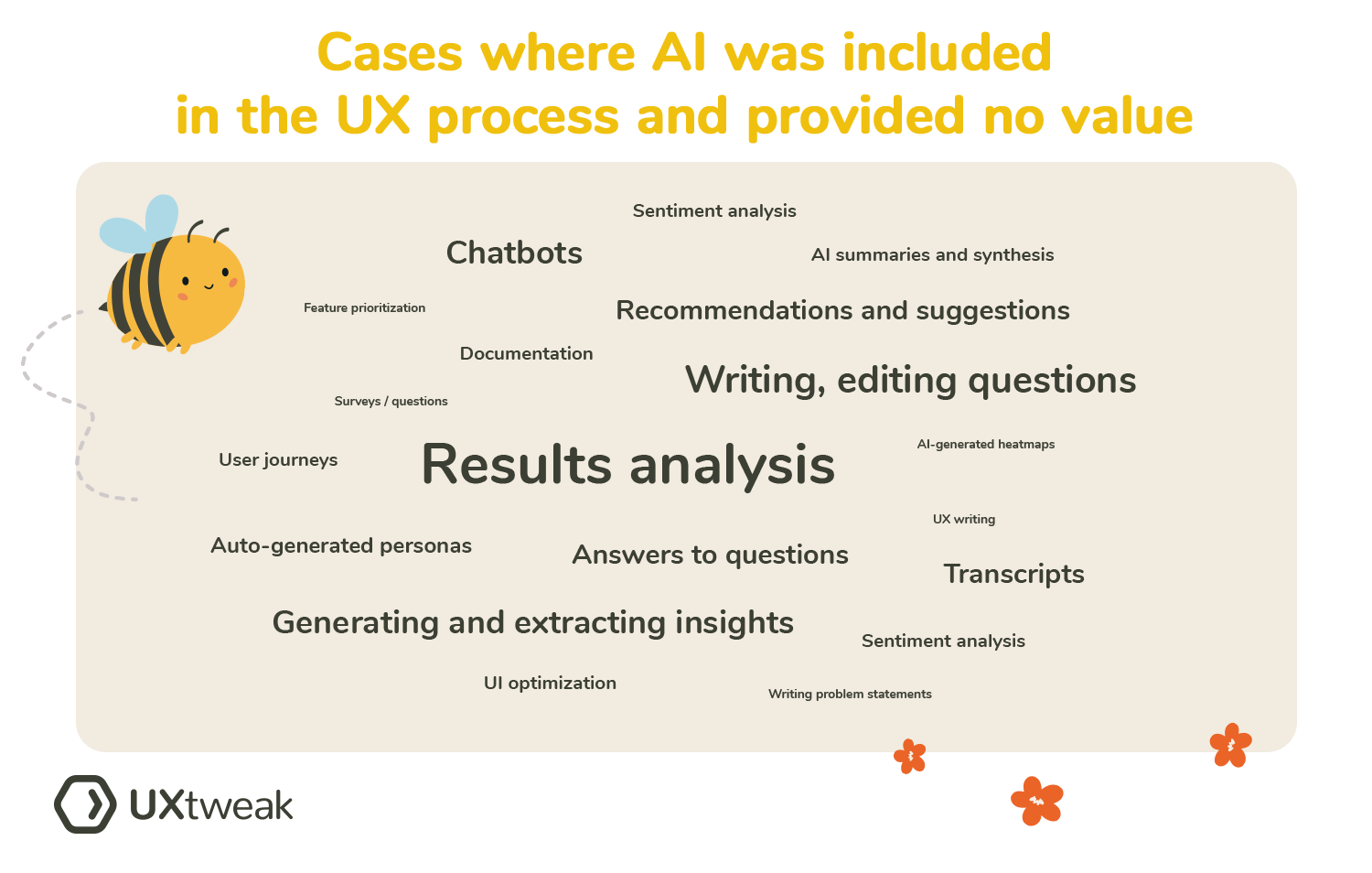

When is AI just a buzzword?

As shown in the plot, excluding respondents who did not provide an answer, 65.95% (122) of respondents said that they had encountered a case where AI was included in a manner that provided little to no added value.

Additionally, of the respondents who had not encountered such a case, 25.40% (16) answered along the lines of “No, not yet.”

The participants who had encountered a case where AI was included in the UX process and provided little to no added value described these cases.

The 10 most frequently recurring instances were:

- Results analysis – analysis was incorrect, inaccurate, biased, lacked added value, had to double check the data

- Writing, editing questions and answer options for surveys and interviews – unnecessarily long, too general, didn’t fit the scenario, not well refined, useless, not interesting enough, unsatisfactory

- Generating and extracting insights – directly contradicted many qualitative studies, ignored users’ needs and context, missed nuanced insights, unusable, wrong, categorized incorrectly

- Chatbots – inaccurate answers, unhelpful, not able to understand questions, wrong answers

- Transcripts – inaccurate

- Recommendations and suggestions – false, inaccurate, wrong, generic and impractical

- Answers to questions – vague, incorrect, too general, useless, biased

- Auto-generated personas – too broad, generic, creating more work than doing it manually, biased

- AI summaries and synthesis – no value, needed to redo it completely

- Documentation – required too much editing, had to be completely scrapped

Here is a word cloud of the most frequently mentioned cases:

For example, this quote illustrates how faster content generation can easily lead to an excess of content or questions, which in the end proves detrimental:

“Generating additional questions into a survey just because it could be easily done with ChatGPT. It made the survey unnecessarily long and has led to a higher abandonment rate.”

Respondent: UX Researcher, Slovakia, 2-5 Years of Experience, works in Software and App development

This answer zoomed in on the low quality of shallow automated analysis:

“Plenty. Lots of these “Give us a meeting transcript/recording and we’ll pull out the findings” churn out absolute garbage analysis. They inherently take responses at face value (“User didn’t like this button. Insight: Maybe change this button to be more user friendly”) rather than having any understanding of the research questions behind the transcript, or how user context, demographics, attitudes etc. could be swaying certain aspects. Delegating the trend extraction work hurts the quality of everything as you as a UXR aren’t making the links yourself, and the AI tools just aren’t good enough to do the job for you and stand in. It’s not like pulling this data takes much time anyway.”

Respondent: UX Strategist, United Kingdom, 5-10 Years of Experience, works in Software and App development

Even in this question, some answers mentioned the ever-present bias of AI models:

“AI is biased: Results of analysis have to be thoroughly checked, and sometimes AI (namely Chat GPT) gives results out of the blue”

Respondent: UX Researcher, Germany, 10+ Years of Experience, works in Business consultancy or management

Another key problem mentioned in the answers was that the results provided by AI were in direct conflict either with the answers from human participants or with the already established/present knowledge base:

“Yes, we used it to see if it could generate meaningful insights based on public data about our well-known and highly documented product, but the results directly contradicted what we know from conducting many qualitative studies.”

Respondent: UX Executive, United States, 10+ Years of Experience, works in Software and App development

One of the concerns mentioned by multiple participants was the questionable value and validity of AI answers. On a positive note, AI in the role of an ideation partner seemed to work well:

“Yes – in generating possible question answers, the answers were useless. The questions themselves that were generated were OK and helped in brainstorming the right questions to ask.”

Respondent: UX Researcher, United States, 10+ Years of Experience, works in Business consultancy or management

The use of language models and AI based responses

Usage of GPT-like language models

As with Q3, the main aim of this question was to separate the participants who have used language models in their survey-based research from those who haven’t.

As the plot shows, two thirds of our participants have used language models in their survey research. This split was more or less what we expected.

Based on the response to this question, we asked our participants different follow up questions.

Language model users

Preferred ways of using GPT-like models in survey research

The most frequently picked option was generating questions based on prompts, with 61.7% of participants selecting it. We explain this by the fact that, in this case, the participants supply the exact data the model uses to create the questions and can easily review the result. Simply put, we believe this option was chosen most often because it is where participants feel they have the greatest influence and control over the results.

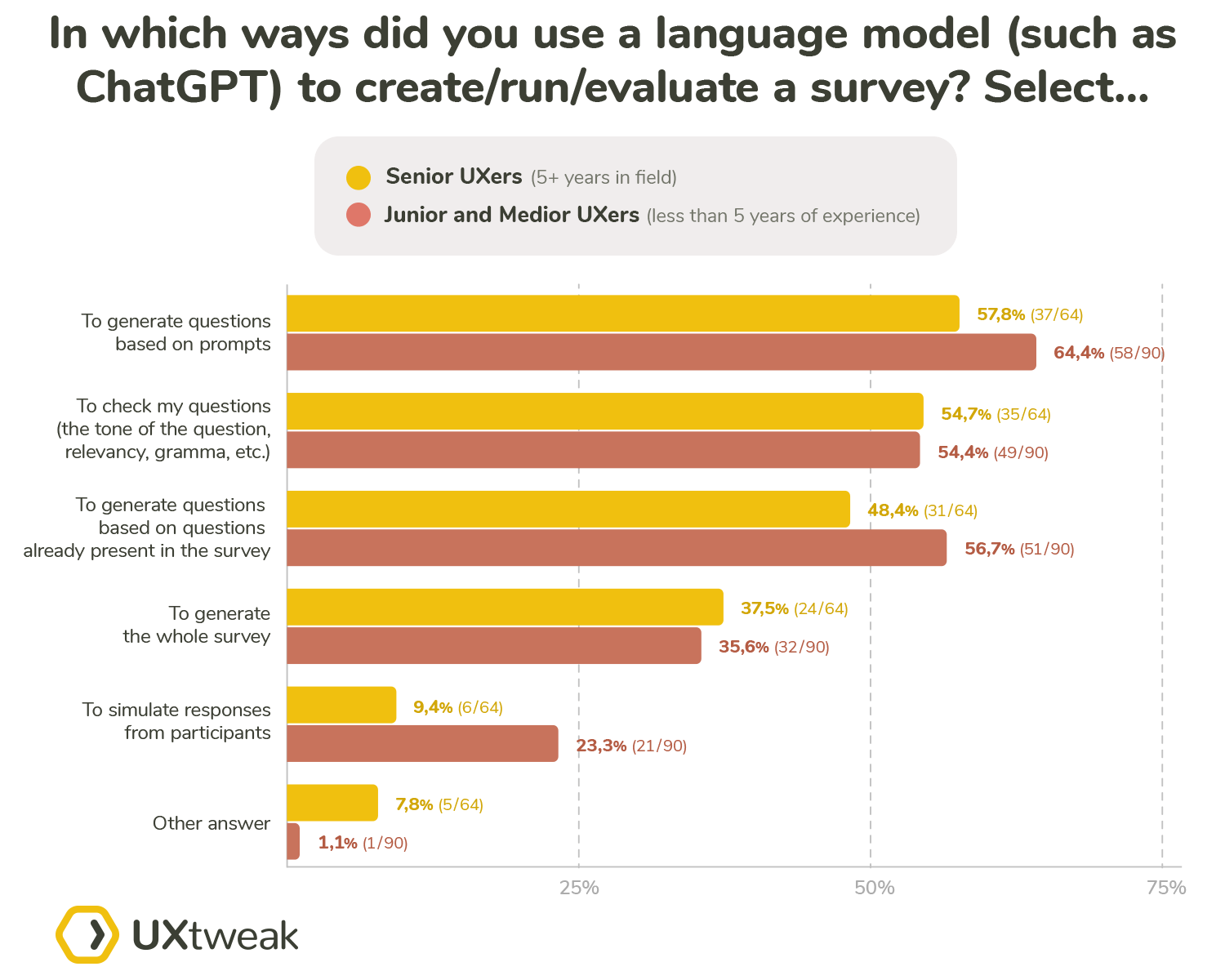

One of our aims here was to learn whether the experts in different positions and seniority levels utilize language models in different ways.

The more senior participants are significantly less likely to use language models to simulate participant responses, with less than 10% doing so. In comparison, almost a quarter (23.3%) of the juniors and mediors used these language models to generate responses. This can have multiple explanations. One straightforward explanation may be that the seniors are simply less likely to trust the results of AI respondents.

Alternatively, you could argue that senior UX experts usually tackle more complex studies, for which AI would perform significantly worse than for simpler studies.

The closest results were for checking the questions (tone, grammar etc.), which both groups selected at around 54%. We explain this by the notion that this use of language models is the most straightforward, could be considered the most “mundane” work, and is easy to double-check.

What we found interesting was that both groups answered similarly about using these models to generate the whole study. Here we expected the seniors to be more distrustful of AI capabilities than the juniors and mediors.

As expected, the seniors proved to be more conservative when it comes to generating questions, regardless of whether the questions are generated from prompts or from questions already present in the survey.

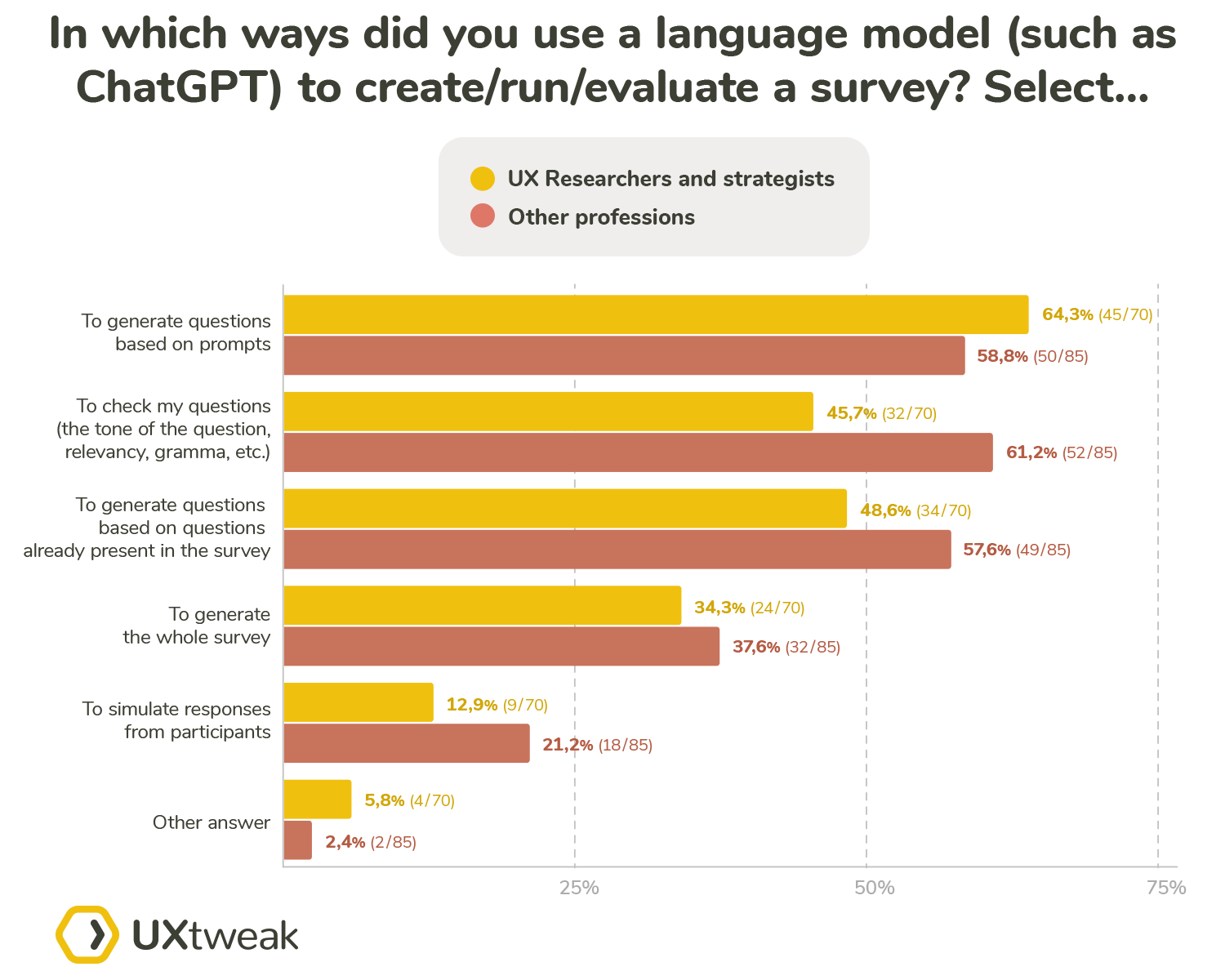

When we split the answers between researchers and the other professionals, the results were rather similar to the seniority split. The main difference was in checking the questions: researchers seem to trust AI less in this respect than the rest of the participants. Therefore, the more even result in the seniority split can be attributed to senior professionals who are neither UX researchers nor UX strategists.

Satisfaction with GPT results

Since this question used a Likert scale, we calculated the average score across all participants, which landed at 5.15 based on 153 responses (not all participants saw this question). These results suggest that the participants consider the performance of these language models rather satisfactory. Almost half of the participants (74) chose the scale value 5, which supports this conclusion.

We also analyzed the results by seniority and position. Option 5 remained the most selected option. The average scores differed significantly neither between the splits nor from the overall result.

Language model non-users

Reasons behind not using GPT-like language models

After going through a variety of different answers, we gathered those that came up multiple times:

- AI being a black box, with the reasons behind its outputs unknown

- Privacy (company or personal details), risk of leaks

- Generic answers

- Lack of human factor

- Enough time to manually create the survey

Some of the answers described the main problem as the questions being too generic, even cookie-cutter-like. Just to quote one of these answers:

“The same reason I didn’t ask Clippy to write my college assignments. Creating surveys is difficult and a fine art, but in swarming over it – getting the whole team involved in evaluating the questions – pulling questions apart, arguing over individual words – you think very deeply about what assumptions you are making and what you really want to find out. If I wanted something cookie cutter, I’d just take a previous one and change a few words, which is normally how I start, but just like the process of reading interview transcripts over and over for analysis embeds those insights, reading your own questions over and over helps clarify thought and improve understanding. When the product manager says “why are you asking it like this and not that?”, if you can articulate a compelling answer, you both learn something.”

Respondent: UX researcher, England, 10+ Years of Experience, works in Software and App development

A second great point made in this answer is that the process of question creation also offers many learning opportunities. If you let AI complete this process for you, you lose everything you could have learned along the way. Another problem, which we have already cited multiple times, is the inherited bias of AI models. One of the answers that touched on this subject was:

“I think language models can suffer from data bias, resulting in questions or answers that are not diverse enough or inaccurate.”

Respondent: UX researcher, United States, 5-10 Years of Experience, works in Engineering and Manufacturing

Another aspect that showed up in the answers was time. Participants had different points of view on it. Some of them leaned towards this answer:

“I had enough time and information to write the survey myself.”

Respondent: UX researcher, Australia, 2-5 Years of Experience, works in Healthcare

Simply stated, the participant didn’t need to seek help from an AI model. Even if such a need arises, there is no guarantee that consulting a GPT-like model will add any value:

“I tried it, just to get inspired but I know how to create questions, I would spend so much time creating the correct prompt so the results are valid for me. I decided to do it on my own.

Nevertheless I knew I would need to review it and change it, so this way was less time consuming.”

Respondent: UX researcher, Czech Republic, 2-5 Years of Experience, works in Healthcare

Study types best fit for AI based users

Professionals had significantly different views on the usage of AI participants in different study types. None of the presented options was chosen by more than 55% of the participants. The scenario in which the professionals see the highest potential for AI based users was studies focused on information architecture, such as card sorting or tree testing. 51.7% of participants chose this option.

This can be explained by how such studies work. With a card sort, for example, if the AI took the semantic similarity of the card labels into account, clustered the cards into categories by similarity, and then inferred an appropriate name for each category, the results could have some relevance. Similarly, in a tree test it could compare the task text with all the nodes on a given level, proceed with the most similar one, and continue through the levels until it finds the leaf closest to the task text. However, it is important to note that this way you receive only one response with no variety. Even though it might be the most logical in some cases, that doesn’t mean it’s the most human, which is the answer you are actually looking for.
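To make the idea above concrete, here is a minimal Python sketch of how such an AI based participant could behave. It is an illustration under loud assumptions, not how any particular tool works: word overlap stands in for real semantic similarity, and the helper names (`similarity`, `cluster_cards`, `name_cluster`, `walk_tree`), the threshold, and the sample cards and tree are all hypothetical.

```python
from collections import Counter


def similarity(a, b):
    """Word-overlap (Jaccard) score, a crude stand-in for semantic similarity."""
    ta, tb = set(a.lower().split()), set(b.lower().split())
    if not ta or not tb:
        return 0.0
    return len(ta & tb) / len(ta | tb)


def cluster_cards(cards, threshold=0.2):
    """Greedy card sort: put each card into the most similar existing cluster."""
    clusters = []
    for card in cards:
        best, best_score = None, 0.0
        for cluster in clusters:
            score = max(similarity(card, member) for member in cluster)
            if score > best_score:
                best, best_score = cluster, score
        if best is not None and best_score >= threshold:
            best.append(card)
        else:
            clusters.append([card])  # nothing similar enough: start a new category
    return clusters


def name_cluster(cluster):
    """Infer a category name as the most frequent word across the card labels."""
    words = Counter(word for card in cluster for word in card.lower().split())
    return words.most_common(1)[0][0]


def walk_tree(tree, task):
    """Greedy tree test: on each level, follow the label most similar to the task."""
    path, node = [], tree
    while node:  # an empty dict marks a leaf
        label = max(node, key=lambda candidate: similarity(candidate, task))
        path.append(label)
        node = node[label]
    return path


cards = ["Track order", "Order history", "Reset password", "Change password"]
clusters = cluster_cards(cards)
print(clusters, [name_cluster(c) for c in clusters])

tree = {
    "Account": {"Password settings": {}, "Profile": {}},
    "Orders": {"Order history": {}, "Returns": {}},
}
print(walk_tree(tree, "Change your account password"))
```

Note that every run on the same input returns exactly the same grouping and the same path. That is the limitation described above: a single, "most logical" response with none of the variety you would get from real participants.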

Despite the ever-present controversy, 46.98% of participants believe that AI based responses can be used in surveys.

It is important to note that out of the 22 “Other” answers, 17 were along the lines of “none”, “none of the above”, etc. Therefore, we can treat the “Other” answer as more or less equivalent to “None”.

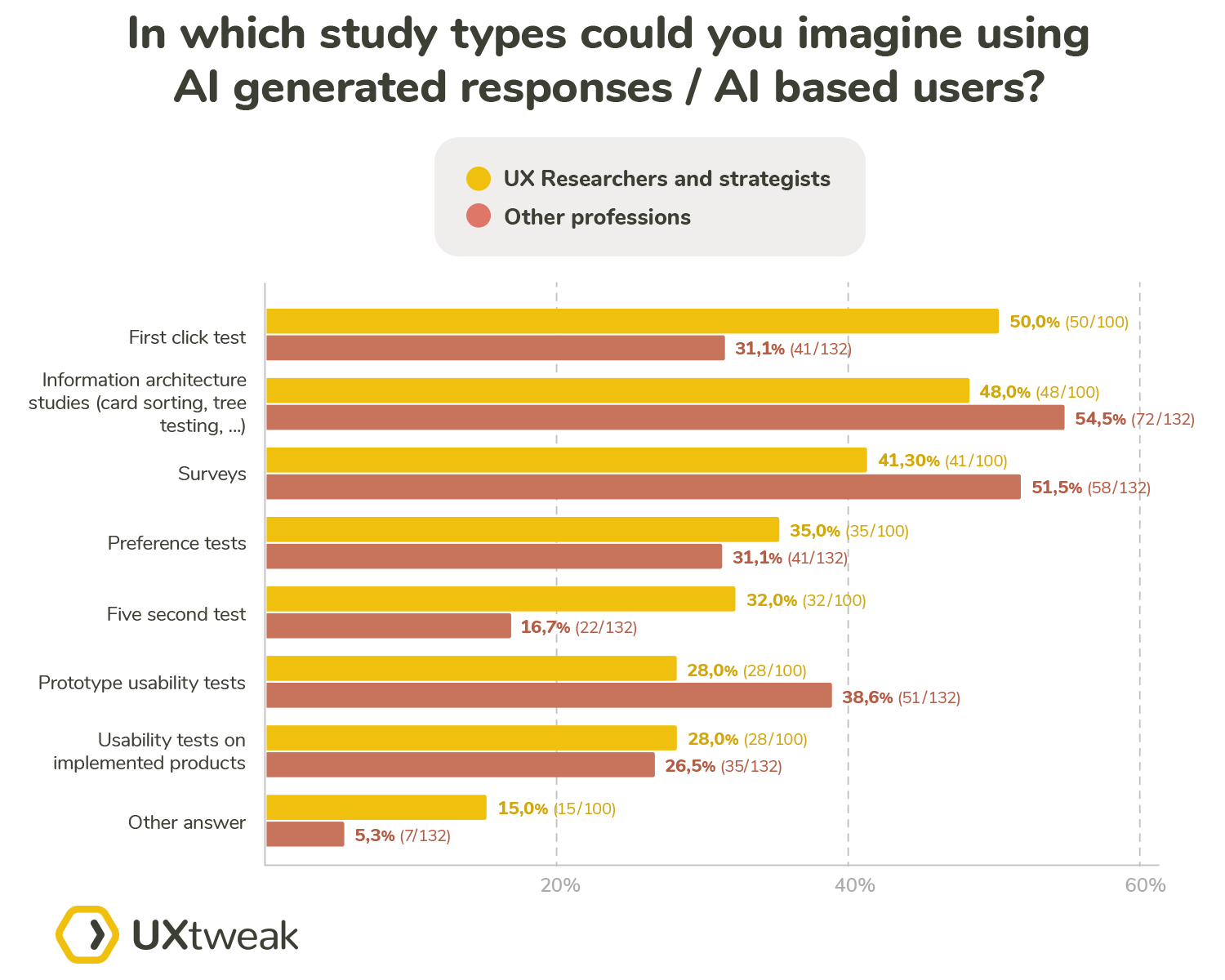

After splitting the answers by position, we can see significant differences. The biggest discrepancies between research specialists and the other professionals were in surveys, prototype tests, first click tests and five second tests. With regard to surveys, 40.59% of the researchers believe that AI responses can prove viable in some way, compared to 51.52% of the other professionals.

On the other hand, 49.50% of the researchers believe AI can be used in first click tests, compared to only 31.06% of the other professionals. The same goes for five second tests, which were chosen by 31.68% of the researchers compared to only 16.67% of the other participants.

This could suggest that the researchers trust AI in these cases significantly more than the other participants do. However, it is important to note that these two study types are less well known and therefore less used, especially compared to well-established terms such as survey or usability testing. It is therefore possible that the non-researcher participants did not select these options simply because they didn’t know them.

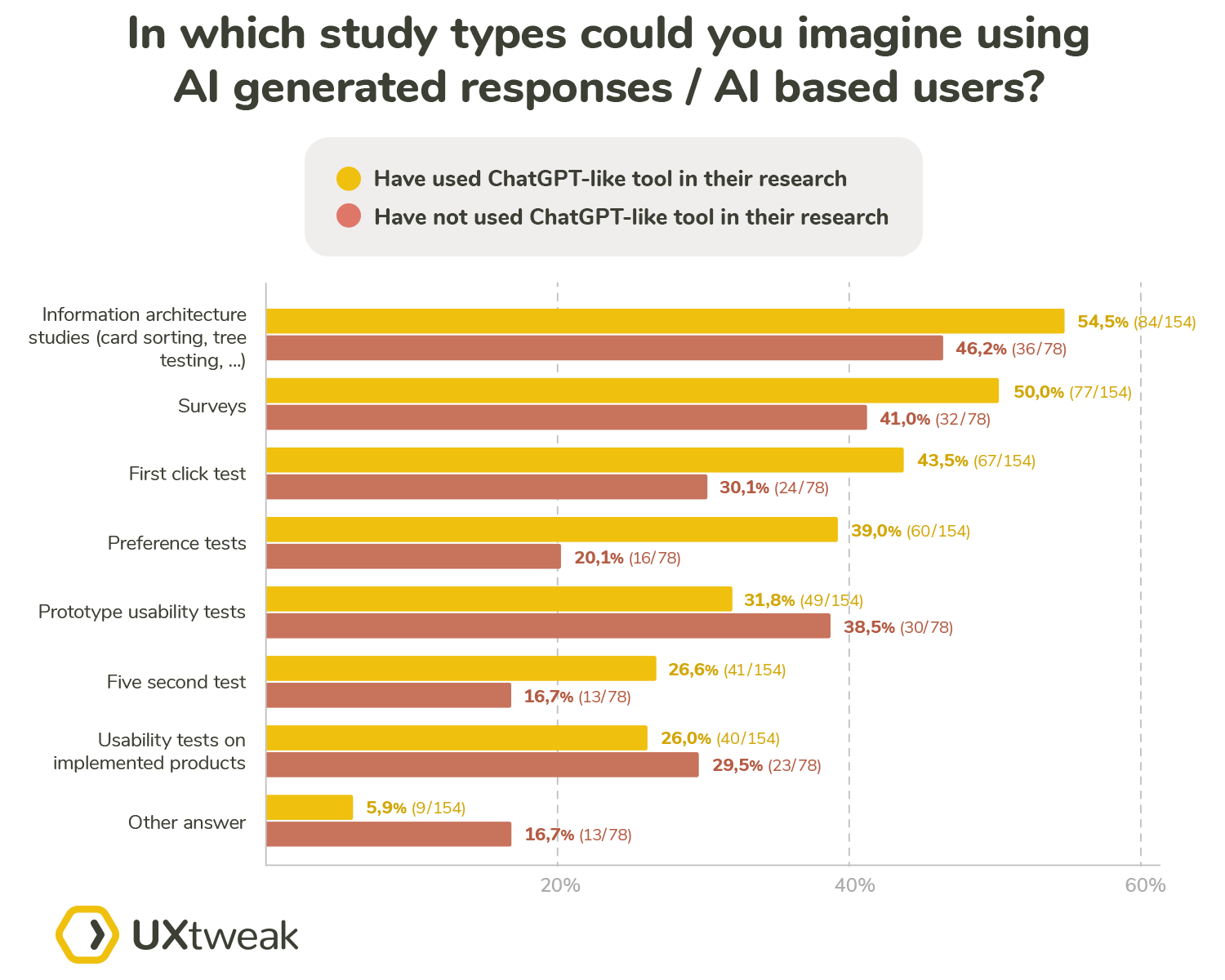

The other split we wanted to investigate more closely was by the usage of GPT-like models. Since an AI based user would in most cases be built on a similar language model, we wanted to see whether users of GPT-like models would be more or less open to using these responses.

As expected, the GPT-like model users selected most of the options more frequently. The only exceptions were the options relating to usability testing and the “Other” (“None”) option.

What are the pros and cons of using AI generated responses

Since there are two sides to every coin, we asked our participants to share their thoughts on both the positives and the negatives of using AI based responses. Even though we expected the answers to be predominantly negative, a significant number of responses cited considerable positives. This question was voluntary, and 190 of the 232 participants decided to answer. Not all participants provided both pros and cons.

We have gathered the pros and cons with most references in the following table:

| Pros ✅ | Responses | Cons ❌ | Responses |
| --- | --- | --- | --- |
| More data in shorter time | 39 | Not human (emotions, quirks, neurodiversity, non-verbal cues etc.) | 85 |
| Lower cost | 25 | Technical limitations | 22 |
| Better control, consistency | 5 | Job loss | 7 |

Not only the most common responses but the responses in general leaned towards the cons of using AI based responses. The most dominant con, and also the most frequent answer overall, touched in some manner on the lack of uniquely human attributes and characteristics, which simply cannot be simulated by AI. Some of the specific responses mentioning this aspect were:

“Customers often will do things that are unexpected or against their best interest. Until a AI can mimic those behaviors, it might not be accurate to overall experience.”

Respondent: UX strategist, United States, 10+ Years of Experience, works in Retail

This point underscores the fact that AI decides based on “logical” patterns, which it learned from previous scenarios. There are no random errors of the kind that might simply be rooted in the personal quirks of a real participant.

“I feel that AI does not have enough emotional refinement, which often drives people to like or dislike a product or service. The more specific the target audience is, I imagine it is more difficult for the AI to be accurate in its analysis.I also believe that AI builds on existing solutions, so truly innovative solutions would be unlikely.”

Respondent: UX designer, Brazil, 2-5 Years of Experience, works in Education

In the same context of missing emotional depth, this quote also addresses the learning process of AI models, which is based on previous or existing solutions. Since AI lacks curiosity, improvisation and intuition, it is highly unlikely that it would come up with creative workarounds or alternative solutions the way a human respondent would.

“Limited alternative bias” and “availability heuristic” are the first and biggest issue with the use of any use case of GenerativeAI that is the massive con specially when taking neurodiversity in check.”

Respondent: UX strategist, South Asia, 5-10 Years of Experience, works in Media Industry

This quote drives home the point about the human aspect. Taking neurodiversity, daltonism, vision impairment and other specific cases into consideration during your recruiting process is vital to running a truly representative UX study. It is impossible for AI to mimic these cases. It is also important to remember that AI models are biased. The bias comes both from their creators and from the datasets used for their training. The following answer addresses this point:

“AI is riddled with bias. Let me give you an example: I wanted to write a short bio based on my research. I fed it a prompt and addressed myself as Dr. (Last name) [I hold a PhD]. It assumed that I’m a male and addressed me as “he.” My preferred pronouns are “she/her.” Nothing can replace actual human users and their experience no matter how many simulations one might conduct using AI. While I will use AI to help me with my research process (asking the right research questions, framing survey questions), I will never use it as a proxy for actual users.”

Respondent: UX researcher, United States, 2-5 Years of Experience, works in Software and App development

The final quote for the “cons” side hammers its point home:

“It absolutely should NOT be done. There is no pro to it. There is no con to it. It just shouldn’t be done. In the way that cigarettes shouldn’t be used to improve lung health and functionality.”

Respondent: UX researcher, United States, 10+ Years of Experience, works in Software and App development

On the positive side, the answers mainly focused on the implicit benefits, such as lower cost or quicker access to results:

“Virtual users generated by AI simulate large-scale user behavior, saving time and cost.”

Respondent: Product manager, Pakistan, 2-5 Years of Experience, works in Software and App development

“Quickly get a lot of quantitative data with an AI able to simulate the right target group.”

Respondent: UX researcher, Czech Republic, 2-5 Years of Experience, works in Software and App development

One of the participants looked at the difference between AI and human responses from the other perspective:

“Using AI generated responses give a direction and other options we might not have thought about. There is always a surprise to it.”

Respondent: UX researcher, Sweden, 2-5 Years of Experience, works in Software and App development

This can be true in some specific situations. However, we would still suggest double-checking any of these ideas or leads with real participants.

We also asked our experts a similar question in our qualitative research: What are your general thoughts on AI generated responses / AI based users? What are the biggest advantages? What should they never be used for?

In a manner that aligned with the results from our survey, the experts also took a more negative stance towards this topic. You can read all responses in our roundup, but to provide just a few:

To round it all up, we have one last quote from one of our survey participants, which we believe sums it all up:

“We are designing for people, not AIs.”

Respondent: UX designer, United States, 10+ Years of Experience, works in Defense

AI and job security

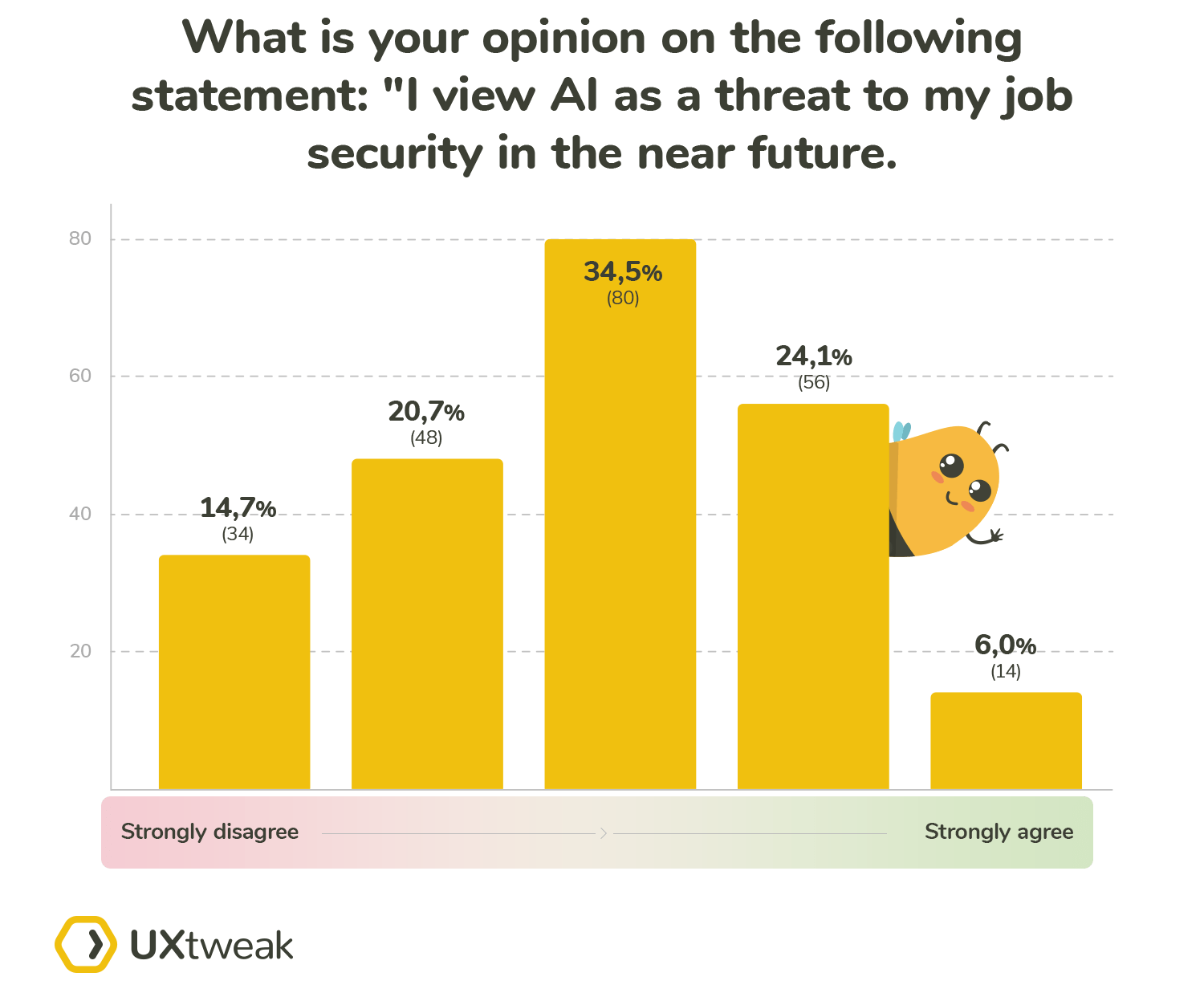

Job security is one of the aspects that naturally comes to mind whenever AI is discussed, so we made this topic a standalone question. In our survey, we asked our participants whether they agreed with the following statement: “I view AI as a threat to my job security in the near future”.

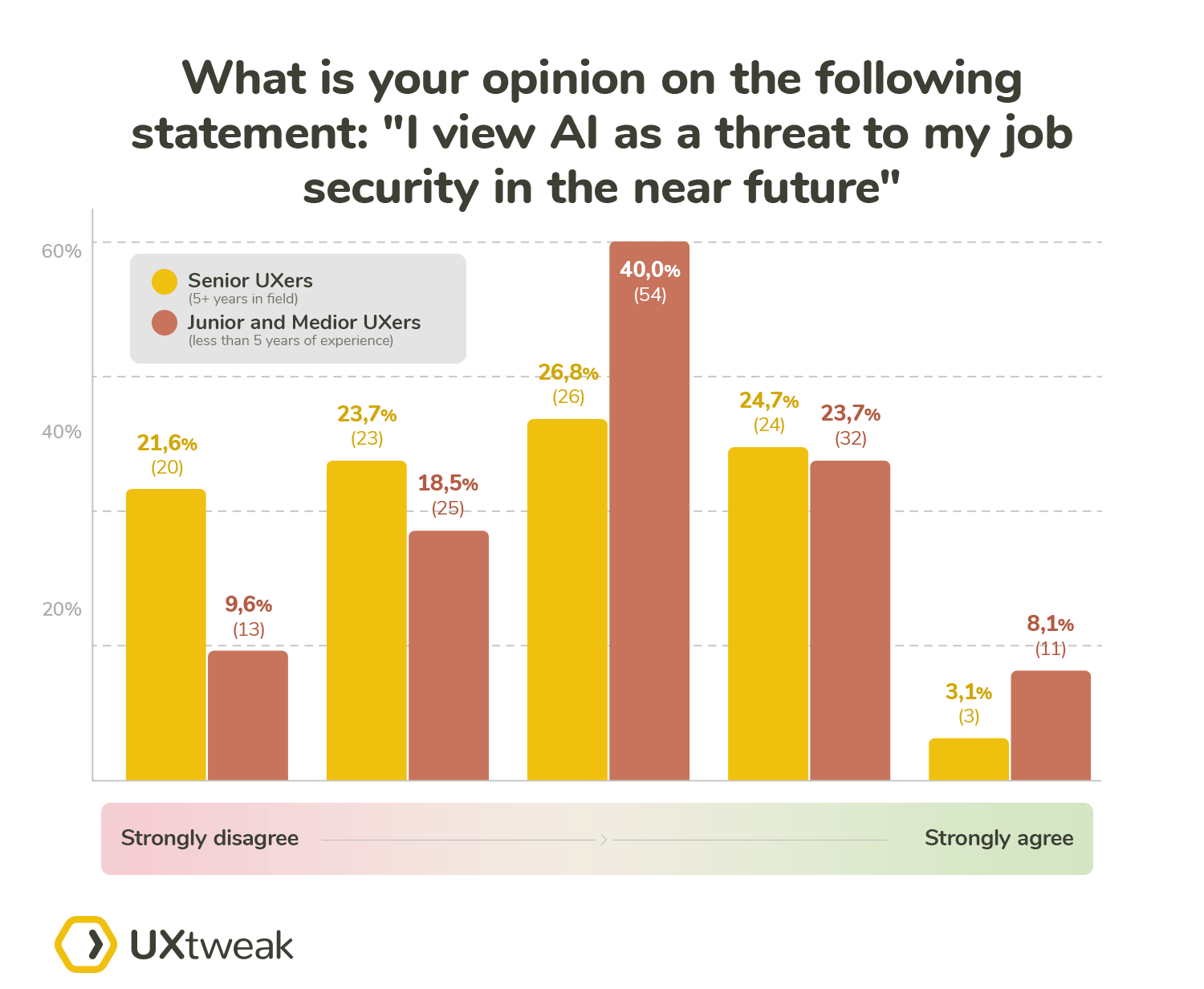

When we take all answers into consideration, we get an average score of 2.86, which suggests a neutral stance among the participants. We then decided to split the answers by the participants’ seniority in the field. We split them into two groups: less than 5 years of experience and 5+ years of experience.

The senior UXers are less concerned about losing their job to AI (an average score of 2.656 on a sample of 96 answers). In comparison, the juniors and mediors averaged 3.02 on a sample of 135 answers, meaning they are more concerned about their job security.

To gather additional inputs on this topic, we asked UX experts whether they think a UX researcher can remain market viable down the road if they don’t choose to adopt AI into their research process.

Some experts believe that the UXRs will remain viable even without adopting AI. One of the often cited reasons was that the AI cannot approximate the years of experience and the intuition of a seasoned UXR. To quote Debbie Levitt, MBA:

Debbie also provided a tip on how to lower your concerns about being replaced by an AI:

Additionally according to Darren Hood, MSUXD, MSIM, UXC:

And finally in the opinion of Julian Della Mattia:

Based on the answers from our survey participants and respected industry experts, we can conclude that UX seniors feel less threatened by the advent of AI, and rightfully so, since it cannot replace their unique skills and experience as of now.

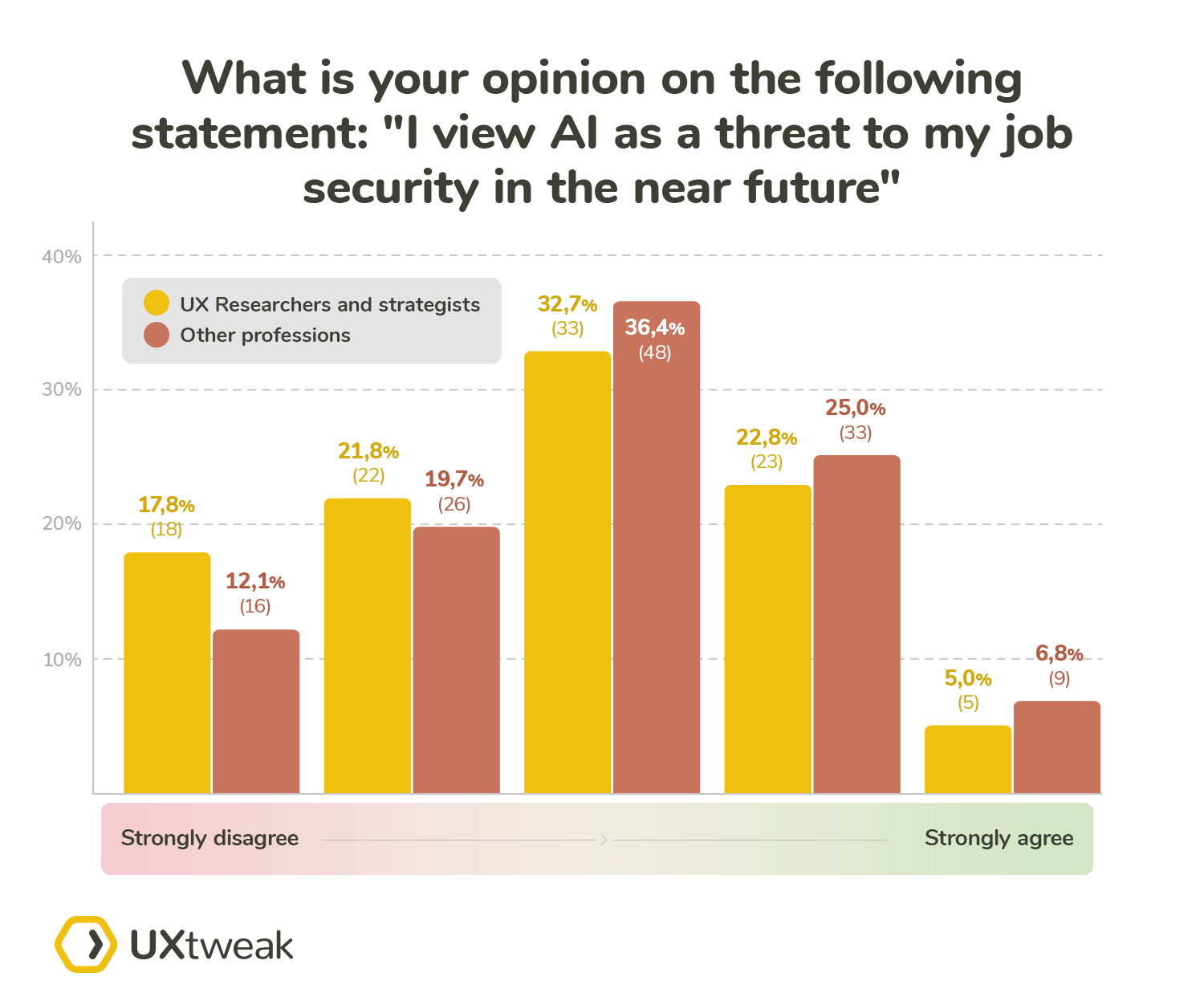

We also decided to split the results based on the job position of the participants. We separated the participants who are hands-on and involved in the day-to-day research processes (UX researchers and strategists) and compared them to the rest of the professionals.

The researchers and strategists averaged a score of 2.752 on 101 responses, suggesting a neutral stance. In comparison, the average score of the other professionals was 2.961 based on 131 responses. This is slightly higher but still within the bounds of a neutral stance.
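As a quick sanity check on these figures, the group averages from both splits can be combined back into an overall average by weighting each group by its number of responses. The short Python sketch below uses only the numbers reported in this section; the helper name `pooled_mean` is ours and purely illustrative.

```python
def pooled_mean(groups):
    """Combine (mean, sample_size) pairs into one response-weighted average."""
    total = sum(size for _, size in groups)
    return sum(mean * size for mean, size in groups) / total


# (average score, number of answers) as reported in this section
seniority_split = [(2.656, 96), (3.02, 135)]   # seniors vs. juniors and mediors
position_split = [(2.752, 101), (2.961, 131)]  # researchers/strategists vs. others

print(round(pooled_mean(seniority_split), 2))
print(round(pooled_mean(position_split), 2))
```

Both splits recombine to roughly 2.87, which is in line with the overall average of 2.86 reported above. The small difference is most likely down to rounding of the published group averages.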

To sum up, based on the answers provided by the community, more senior experts and research-oriented experts feel less threatened with regard to their job security when it comes to being replaced by AI.

Conclusion

When asked explicitly about their attitude towards AI, the participants gave answers that on average suggested a positive attitude. In combination with other answers, we can conclude that our participants are open to including AI in their research as long as the results can be easily explained and checked, and the AI provides added value.

Participants saw the biggest use for AI in cases where the results are (relatively) easy to double-check, such as transcripts and sentiment analysis, as well as in cases where AI augmented or partially automated mundane tasks or where the quality of the results didn’t suffer as much from the lack of the human factor.

Based on the responses from our participants, the lack of the human factor and related attributes can without any doubt be called the biggest flaw of any AI based response. To mention some of the key aspects:

- Inherited unexplainable hidden biases rooted in the training datasets and the creators of the models

- Lack of uniquely human attributes which include, but are not limited to curiosity, intuition, creativity, improvisation and overall unpredictability.

- The inability of AI to make an “incorrect” decision or admit that it doesn’t know an answer; instead, it often hallucinates an answer and presents it as correct.

- No way of representing specific user cases such as neurodivergent people or people with specific diagnoses or disabilities.

- Missing life experience of people represented by a specific persona. No way of simulating the implicit influences these experiences have on the behavior and preferences of the users.

When asked to mention the benefits of possible AI inclusion in UX research, participants named three main ones: time, money and volume. In other words, the main potential benefit was simply described as having more data in a shorter time at lower cost. However, the quality of this data was repeatedly called into question.

A point often stressed by the participants was that whenever AI is included in the research process in any way, the results it provides always have to be validated. Taking the results from AI as it produces them was strongly discouraged by many of our more senior participants and UX experts.

The majority of the participants cited using ChatGPT or a similar language model in their survey-based research. The dominant use of these models was during the setup of the survey, be it creating the questions based on prompts or helping the researcher during the ideation process (e.g. checking the tone of the question). Only a small minority of the participants claimed that they used these models to generate responses for their surveys.

Based on the aforementioned results, we believe that a significant majority of UX experts don’t need to burden themselves with the thoughts of AI replacing them. We can suggest that focusing on honing their skills and gaining additional research experience will make their position even more stable. It is also important that they keep up with the AI trends, at least on the level of not being oblivious to the possible opportunities. Improving AI-related skills such as prompt engineering or AI-assisted data analysis is never a bad idea either.

In conclusion, the advent of AI is influencing the direction UX research is taking and will take. However, in its current state AI doesn’t have industry-wide viability and should be considered only as a tool, and in no case as a replacement for users or for a skilled and experienced UX researcher. It is paramount that we never forget that we are creating user-centric designs and solutions, not AI-centric ones.