Outset is part of a growing wave of AI-powered UX research tools designed to speed up qualitative research and reduce manual analysis work.

While many teams see clear value in AI-assisted synthesis and automation, fully AI-led interviews still raise valid concerns around research quality, contextual understanding, and participant trust.

This guide explores the best Outset alternatives in 2026, including tools for AI-assisted analysis, usability testing, research repositories, and more researcher-led approaches to scaling UX research.

As Debbie Levitt explains:

“I don’t want AI asking questions, running the session, being an interviewer, etc. You see so many posts on LinkedIn about people unhappy or angry that they had to do a job interview with an AI bot. Well… then do we want to send AI bots to do user interviews? I still say no on this one. Plus this is where our main qual data comes from. If it sucks, we’ve pretty much ruined our study, and we are unlikely to show the value of research, which we’re all under pressure to show.”

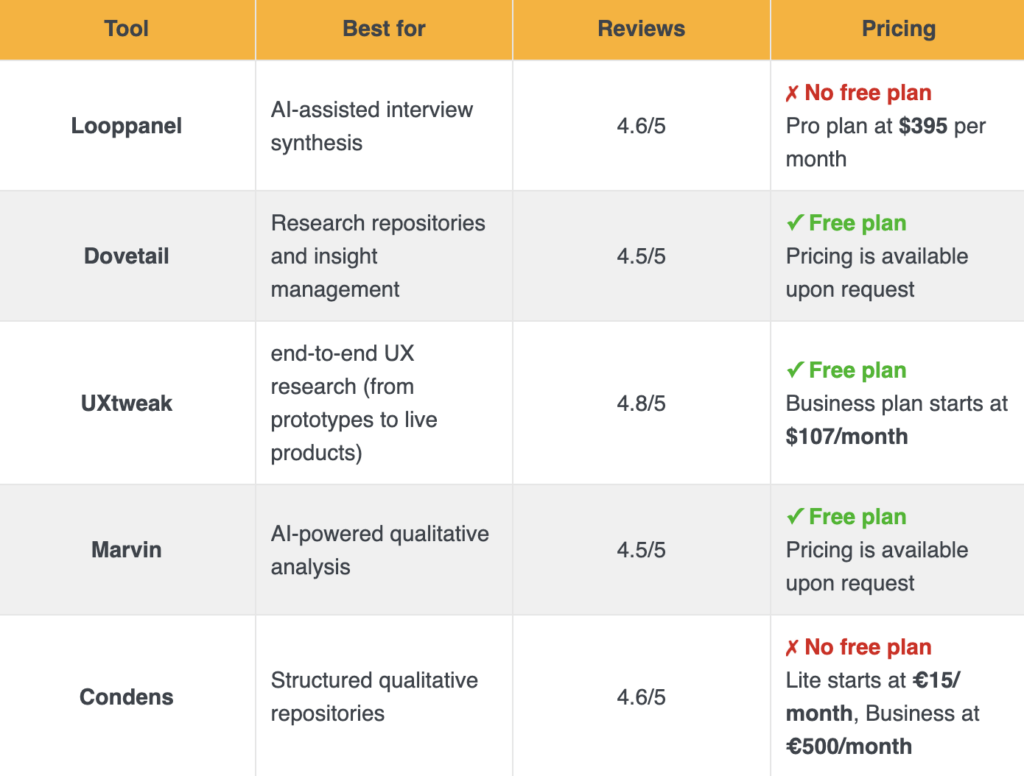

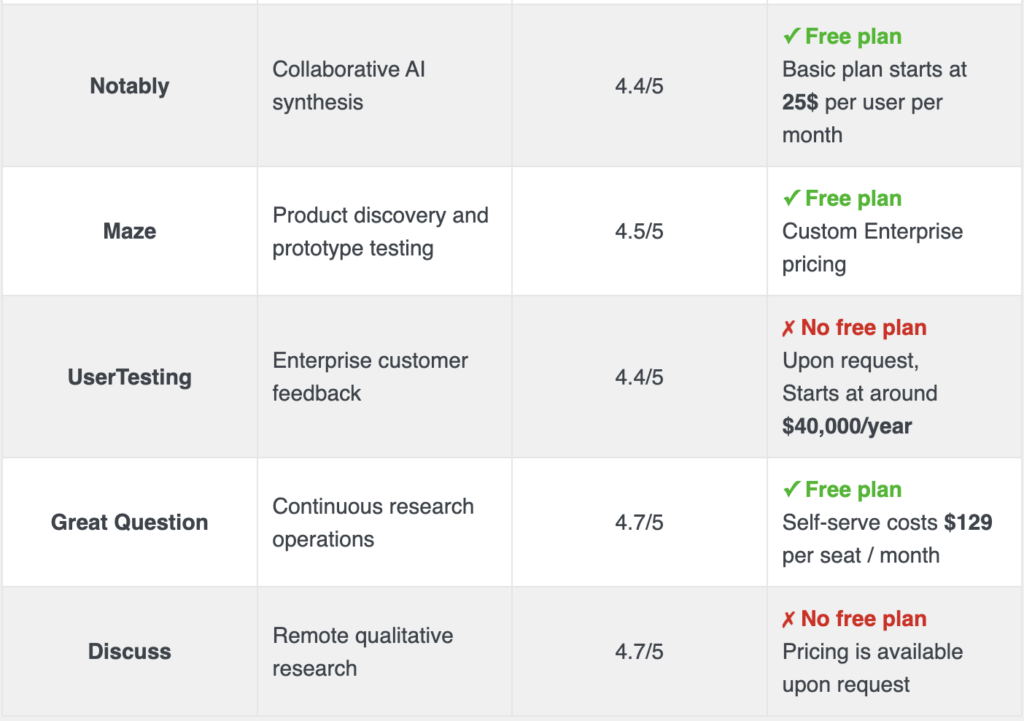

Top alternatives to Outset in 2026

Outset helps teams scale qualitative research through AI-moderated interviews and automated synthesis. While that can reduce operational effort, many UX teams still hesitate to rely heavily on AI during participant interaction.

Researchers commonly mention concerns around shallow follow-up questions, missed contextual nuance, and reduced confidence in AI-generated insights.

Another limitation is that Outset focuses primarily on AI-led interviews, while many teams also need usability testing, repository workflows, behavioral analytics, and participant management.

The tools below take different approaches to solving those challenges.

1. Looppanel

Best for: AI-powered qualitative research analysis and interview synthesis.

Looppanel is a UX research platform designed to help teams analyze user interviews quickly through automatic transcription, tagging, and AI-powered insights. It centralizes research data such as recordings, transcripts, and notes, enabling teams to identify patterns, generate themes, and share findings efficiently. Looppanel is especially suited for product and UX teams that conduct frequent interviews and need to speed up analysis workflows.

Main features

- AI transcription & analysis

- Interview recording & tagging

- Snippet and clip creation

- Research repository

- Collaboration & sharing tools

Pros

- Fast AI-powered analysis: Looppanel automatically transcribes and analyzes interviews, significantly reducing manual work.

- Easy to use: users highlight its intuitive interface and ability to extract insights without extensive setup or cleanup.

- Efficient insight sharing: snippet and clip sharing improves communication with stakeholders.

- Strong pattern detection: AI helps identify themes and trends across large volumes of qualitative data.

Cons

- Limited scope beyond interviews: primarily focused on interview analysis rather than full research lifecycle management.

- Pricing for teams: higher-tier plans can be costly for larger teams or organizations.

- Learning curve for advanced features: deeper AI workflows and tagging may take time to master.

- Less flexible for mixed-method research: not as strong for surveys or quantitative analysis.

Reviews

Based on the information provided by G2:

- Overall Score – 4.6/5

Pricing

Looppanel offers a Pro plan at $395 per month and a custom-priced Enterprise plan for larger teams, which requires contacting sales.

Looppanel vs Outset

Looppanel gives researchers more direct control over moderation and participant interaction while still significantly reducing synthesis effort with AI. For many teams, that creates a more balanced workflow than fully AI-led interviews.

2. Dovetail

Best for: large-scale qualitative research repositories.

Dovetail is a widely used research repository built for organizing, tagging, and synthesizing qualitative data at scale. It combines transcription, highlights, and insight management in one place, making it easier for teams to turn interviews and notes into shareable findings. Dovetail works best for research-heavy teams that need a central system to manage large volumes of qualitative data across projects.

Main features

- Research repository

- Tagging and synthesis

- Collaboration features

Pros

- Efficient research repository: Dovetail centralizes all qualitative data – interviews, notes, and recordings – making it easy to organize, search, and retrieve insights in one place.

- AI-powered analysis: built-in AI features help summarize transcripts, identify themes, and generate insights quickly, reducing manual effort in qualitative analysis.

- Seamless collaboration: the platform enables teams to collaborate in real time, share highlights, and align on insights, improving cross-functional decision-making.

- Strong tagging & organization: flexible tagging systems and structured organization tools allow users to categorize data efficiently and uncover patterns across research projects.

Cons

- Limited quantitative capabilities: while excellent for qualitative research, Dovetail is less suited for handling quantitative data or advanced statistical analysis.

- Pricing for small teams: the cost structure may feel high for individuals or small teams with limited research needs.

- Learning curve for new users: despite its clean interface, mastering tagging systems, workflows, and analysis features can take time for beginners.

- Performance with large datasets: handling very large volumes of data (e.g., long transcripts or many files) can sometimes slow down performance or navigation.

Reviews

Based on the information provided by Capterra:

- Overall Score – 4.6/5

- Ease of Use – 4.6/5

- Customer Service – 4.7/5

- Value for money – 4.3/5

Pricing plans

Dovetail offers a free plan with core features available, along with a custom Enterprise plan for larger teams that need advanced controls, security, and scalability.

Dovetail vs Outset

Dovetail is stronger for long-term insight management and cross-team research collaboration. It works particularly well for organizations building scalable research memory across multiple products and teams.

3. UXtweak

Best for: UX researchers and UX designers (holistic website and mobile usability testing)

UXtweak is an all-in-one UX research platform designed for usability testing, information architecture research, surveys, and prototype validation. It supports full product discovery workflows, and it’s particularly strong for teams who want to combine user feedback, behavioral data, and structured research methods in one place.

Main features

- Moderated Testing & User Interviews

- Tree Testing & Online Card Sort Tool (open, closed and hybrid)

- Prototype Testing (with InVision, Figma, and Axure integrations)

- Usability testing (for websites and mobile applications)

- Preference Testing, Five Second Testing, First Click Testing

- Session Recording & Heatmaps tool

- Survey tool

Pros

- Numerous tools for testing various kinds of digital assets, covering everything Maze offers and more

- According to several G2 reviews, UXtweak offers exceptional customer service and has a responsive support staff that can solve any issue in a matter of hours

- Own Database for managing your own testers (equivalent to Maze’s Reach) and an onsite recruiting widget for recruiting your real users

- Panel of 155M+ participants worldwide (testers from 130 countries, 2000+ profiling attributes) with advanced panel targeting criteria available in all plans

- There is a free plan with no time limit

- User-Friendly Interface

Cons

- The platform’s frequent addition of complex features may pose a challenge for users

- UXtweak note: To address this, new tutorial videos are being produced.

Reviews

Based on the information provided by Capterra:

- Overall Score – 4.8/5

- Ease of Use – 4.7/5

- Customer Service – 5/5

- Value for money – 4.8/5

Pricing plans

UXtweak offers various pricing plans, each designed to suit different testing needs, including a no-cost option for smaller projects.

Free Plan (€0/month) – a great way to experiment with UX research tools at no cost. Includes access to all tools, 15 responses/month, 1 active study, and 14-day access to results.

Business Plan (€92/month, billed annually) – ideal for teams that require essential UX research tools and features for their projects. Includes 50 responses/month (upgradable), 1 active study (upgradable), unlimited tasks per study, 12-month data retention, reports and video exports.

Custom Plan (pricing upon request) – tailored for organizations with advanced research needs, providing unlimited active studies, customizable responses, live interviews, access to a global user panel and much more.

UXtweak vs Outset

Outset focuses heavily on AI-led interviewing and synthesis. UXtweak takes a more balanced approach by supporting the parts of research where AI is genuinely useful while keeping researchers in control of moderation, interpretation, and decision-making.

For teams that care about research quality as much as research speed, that balance is often the more practical long-term approach.

4. Marvin

Best for: AI-powered qualitative research analysis and insight generation at scale.

Marvin (HeyMarvin) is a user research platform designed to help teams analyze qualitative data quickly using AI. It centralizes interviews, notes, surveys, and documents in a single repository, then uses AI to tag, summarize, and extract insights automatically. Marvin is particularly useful for UX researchers and product teams running continuous research who want to speed up analysis and reduce manual effort.

Main features

- AI-powered qualitative analysis

- Research repository & knowledge hub

- Automatic transcription & summaries

- Tagging, themes, and insight extraction

- Survey analysis & integrations

Pros

- Powerful AI analysis: Marvin significantly reduces manual work by automatically summarizing transcripts and identifying themes.

- Centralized research repository: stores interviews, notes, and documents in one searchable system.

- Efficient insight generation: helps teams uncover patterns across large volumes of qualitative data quickly.

- Good collaboration tools: enables teams to share insights and work together in real time.

Cons

- Limited end-to-end research features: lacks built-in participant recruitment or usability testing tools.

- Pricing not transparent: most paid plans require contacting sales, making costs harder to estimate upfront.

- Learning curve: AI workflows and tagging systems may take time to fully understand.

- Dependent on data quality: insights rely heavily on the quality of uploaded research data.

Reviews

Based on the information provided by Capterra:

- Overall Score – 4.8/5

- Ease of Use – 4.9/5

- Customer Service – 4.9/5

- Value for money – 4.7/5

Pricing

Marvin offers a free plan. Paid plans such as Standard and Enterprise are custom-priced based on team size and usage, and pricing is available only by contacting sales.

Marvin vs Outset

Marvin is more focused on AI-assisted analysis than AI-led moderation. That gives researchers greater control over how interviews are conducted and interpreted.

5. Condens

Best for: structured qualitative synthesis and research repositories built for research teams

Condens is a widely recognized tool among experienced UX researchers, designed to transform raw research into reusable insights through structured coding, synthesis boards, and evidence-backed findings. It is valued for its clarity, transparency, and flexibility across various research methods, making it particularly well-suited for teams conducting continuous research programs.

Main features

- Qualitative coding and synthesis

- Insight libraries with evidence linking

- Collaboration and stakeholder sharing

- Strong ResearchOps support

Pros

- Strong repository functionality helps create a “single source of truth” for research

- Intuitive and clean interface appreciated by many users

- Automatic transcription and qualitative analysis tools save time

- Great for collaboration and sharing insights across teams

- Fast and responsive customer support according to user reviews

Cons

- Not a full usability testing platform (no built-in participant recruitment or task-based testing)

- Limited support for quantitative research and metrics

- Transcription accuracy can sometimes require manual correction

- Tagging and organization can become complex in large projects

- Pricing may be high for larger teams or scaling organizations

Reviews

Based on 7 user reviews provided by Capterra:

- Overall Score – 5.0/5

- Ease of Use – 4.9/5

- Customer Service – 5.0/5

Pricing plans

Condens offers a Lite Plan for €15 per month, a Business Plan starting at €500 per month, and a custom Enterprise Plan.

Condens vs Outset

Condens is a stronger fit for teams prioritizing structured synthesis and long-term research memory rather than AI-led interviewing workflows.

6. Notably

Best for: collaborative AI-assisted synthesis and qualitative analysis workflows for UX research teams

Notably helps researchers organize, tag, and synthesize qualitative data using AI-supported thematic analysis and collaborative workspaces. The platform is designed to make synthesis sessions faster and more structured while still keeping researchers directly involved in interpretation and decision-making.

It is particularly useful for teams running workshops, affinity mapping sessions, and collaborative insight generation across large volumes of qualitative data.

Main features

- AI-assisted tagging and thematic analysis

- Collaborative synthesis boards and shared workspaces

- Research repositories and insight organization

- Support for workshop-style analysis workflows

Pros

- Speeds up qualitative synthesis without fully automating interpretation

- Strong collaboration workflows for distributed research teams

- Flexible organization of notes, themes, and findings

- Easy to use for affinity mapping and thematic clustering

- Helpful for managing large volumes of qualitative data

Cons

- Less suited for usability testing or behavioral research workflows

- Limited participant recruitment and moderation capabilities

- AI-generated tagging still requires manual review and refinement

- Can become difficult to structure in very large research repositories

- Fewer advanced ResearchOps features than dedicated repository platforms

Reviews

Based on publicly available user reviews:

- Overall Score – 4.4/5

- Ease of Use – frequently praised for collaborative workflows

- Customer Support – generally rated positively by users

Pricing

Notably offers a free plan, with paid plans starting at approximately $40 per user/month. Enterprise pricing is available for larger organizations and advanced collaboration needs.

Notably vs Outset

Notably is better suited for collaborative synthesis and team analysis workflows. It supports AI-assisted organization without shifting moderation away from researchers.

7. Maze

Best for: product teams that need rapid, unmoderated prototype testing and quantitative design validation at scale

Maze is a user research platform that provides tools for conducting different types of user research, such as prototype and live website testing, surveys, interviews, card sorting, and tree testing.

Main features

- Prototype Testing

- Live Website Testing

- Surveys

- Interview Studies

- Card Sorting

- Tree Testing

Pros

- Easy integration with design tools: users appreciate how Maze integrates seamlessly with tools like Figma, making prototype testing straightforward.

- Diverse question types: the platform offers a variety of qualitative and quantitative questions.

- Effective prototype testing: Maze is highly regarded for its prototype testing capabilities, allowing quick setup and execution.

- User-friendly survey creation: Maze makes survey construction easy, offering users both control and simplicity.

- Data organization: responses can be easily converted into an Excel format, aiding in better data management and tracking.

Cons

- Learning curve: new users, especially those unfamiliar with user testing platforms, may find Maze challenging to learn and navigate.

- Prototype stability issues: users have reported frequent crashes, particularly with mobile prototypes, affecting the reliability of test results.

- Limited conditional logic: there’s a desire for more sophisticated question logic to build detailed tests without overwhelming participants.

- Report customization: users wish for more flexibility in editing and combining reports, finding the current options limiting.

- Email campaign manager: the tool is considered clunky, with emails often landing in spam folders, reducing the effectiveness of participant recruitment efforts.

- Test participant reliability: there are instances of test participants not fully committing to tests, with a notable number dropping out or exiting the test prematurely, despite compensation.

Reviews

Based on the information provided by Capterra:

- Overall Score – 4.5/5

- Ease of Use – 4.3/5

- Customer Service – 3.8/5

- Features – 4.2/5

Pricing plans

- Free Plan: basic features with limited functionality.

- Starter Plan: $99/month ($1,188/year).

- Organization Plan: custom pricing for larger teams with extensive research needs.

Maze vs Outset

Maze is a stronger fit for product teams validating flows, interactions, and usability metrics during design iterations. While Outset centers on AI-driven interviews, Maze focuses more on rapid behavioral validation inside product development workflows.

8. UserTesting

Best for: video-first testing

UserTesting was among the first platforms for unmoderated testing. According to the company’s own data, its panel includes more than 1.5 million testers, which usually ensures rapid responses. Product teams have access to a complex platform with many options for running and evaluating user tests and gaining valuable insights.

Main features

- Website testing

- Mobile application testing

- Prototype testing (integrations with many design tools)

- Card sort & Tree test

- Preference test

- 5 second test

- Survey tool

- 1:1 interviews with testers

Pros

- Controlled user testing lets you communicate with your testers while they are on your website

- A large pool of participants for your studies

- Advanced targeting – one of the features customers like most is the ability to target potential clients for research more precisely, thanks to the vast participant pool

- Instant insights – with so many responses, results arrive as quickly as possible

Cons

- Weak analytical tools – users have complained that the automatic reports are difficult to understand for people who aren’t researchers (e.g. stakeholders)

- Limited diversity among testers – despite the platform’s excellent tester pool, several customers reported difficulty finding research subjects who spoke languages other than English.

- You must pay to bring your own testers

- Reports with problems – some users say that UserTesting findings are difficult to understand and assess, which makes it hard to summarize data quickly

Reviews

Based on the information provided by Capterra:

- Overall Score – 4.5/5

- Ease of Use – 4.4/5

- Customer Service – 4.4/5

- Value for money – 4.5/5

Pricing plans

Pricing is available upon request, starting at around $40,000/year. A free trial/freemium option is also available upon request.

UserTesting vs Outset

UserTesting offers broader enterprise research capabilities and participant access, making it more flexible for large-scale customer feedback operations.

9. Great Question

Best for: continuous research operations and participant management for UX teams

Great Question combines participant recruitment, scheduling, research repositories, and panel management into one platform designed for continuous discovery programs. It helps research and product teams streamline operational workflows while keeping participant relationships and insights organized over time.

The platform is particularly well suited for organizations building mature ResearchOps practices and ongoing customer research programs.

Main features

- Participant recruitment and panel management

- Scheduling automation for research sessions

- Research repositories and insight tracking

- Research CRM and participant relationship workflows

Pros

- Strong participant management and recruitment workflows

- Helps centralize ResearchOps processes in one platform

- Useful for continuous discovery and longitudinal research programs

- Reduces scheduling and operational overhead for research teams

- Clean and intuitive interface according to user reviews

Cons

- Less focused on usability testing and behavioral analytics

- Limited advanced qualitative synthesis compared to repository-first tools

- Can become expensive for larger research organizations

- Some integrations and automations require higher-tier plans

- Better suited for operational workflows than deep analysis workflows

Reviews

Based on publicly available user reviews:

- Overall Score – 4.7/5

- Ease of Use – Frequently praised for operational simplicity

- Customer Support – Commonly rated highly by users

Pricing plans

Great Question offers a self-serve plan for $129 per seat/month, and a custom Enterprise plan.

Great Question vs Outset

Great Question provides stronger participant management and research operations infrastructure than Outset’s AI-first interviewing model.

10. Discuss

Best for: end-to-end qualitative research with live interviews and global collaboration.

Discuss.io is a comprehensive qualitative research platform that enables teams to run live video interviews, focus groups, and asynchronous studies in one place. It supports the full research lifecycle – from recruiting and interviewing to analysis – making it especially valuable for enterprise research teams working across global markets. The platform also incorporates AI-powered tools to speed up insight generation and synthesis.

Main features

- Live video interviews & focus groups

- Asynchronous research (self-paced studies)

- AI-powered analysis tools

- Global research capabilities (multi-language support)

- Participant and project management

Pros

- All-in-one research platform: Discuss.io supports the entire research lifecycle, reducing the need for multiple tools.

- Strong global capabilities: features like live translation and multilingual support enable research across international markets.

- High-quality video research: users highlight reliable video interviews and focus group functionality comparable to conferencing tools.

- AI-enhanced insights: built-in AI helps speed up analysis and extract insights more efficiently from qualitative data.

Cons

- Complexity for new users: the platform’s breadth of features can require time to fully learn and adopt.

- Pricing transparency: costs are not clearly listed and typically require contacting sales for tailored plans.

- Overkill for small teams: smaller teams may find it too feature-heavy compared to simpler tools.

- Occasional technical issues: some users report connectivity or usability issues during sessions.

Reviews

Based on the information provided by Capterra:

- Overall Score – 4.7/5

- Ease of Use – 4.8/5

- Customer Service – 4.5/5

- Value for money – 4.6/5

Pricing

Discuss.io offers custom pricing based on team size and needs, with plans requiring you to contact sales.

Discuss vs Outset

Discuss provides stronger support for researcher-led qualitative workflows and enterprise collaboration. While Outset focuses heavily on AI-moderated interviewing, Discuss gives teams more flexibility in how interviews are conducted while still accelerating synthesis with AI-assisted analysis.

Wrapping up

Outset represents a growing shift toward AI-led UX research workflows. For some teams, that level of automation may help research scale faster.

However, most mature UX teams still need strong human oversight during moderation, synthesis, and interpretation, especially in qualitative research. Speed matters, but research quality, context, and participant trust matter more.

That is where UXtweak truly stands out.

It helps teams scale research with AI-supported workflows while still giving researchers full control over usability testing, behavioral analysis, prototype validation, and insight generation.

If you want to make UX research faster without compromising methodological quality, UXtweak is one of the strongest Outset alternatives available today.

Try it for free today and understand how users actually navigate and experience your product. 🐝

📌 Top 3 best Outset alternatives at a glance: